BLS has released the “preliminary benchmark” information for the Current Employment Statistics (CES) survey, the source of monthly information on jobs.

You know what a bench is, and you know what a mark is, but what, pray tell, is a benchmark? And what does this preliminary benchmark tell us?

So as not to bury the lede, I’ll let you know that this year’s preliminary estimate of the benchmark revision is a bit bigger than it has been in the last few years. Our preliminary estimate indicates a downward adjustment of 501,000 to March 2019 total nonfarm employment. Still, that estimated revision is only −0.3 percent of nonfarm employment. In most years our monthly employment survey has done a good job of estimating the total number of payroll jobs; more details on that below. This year our survey estimates are further off than we would like. Our goal is to provide estimates that are excellent, not just good or pretty good, and that’s why we benchmark the survey data each year.

What is benchmarking and why do we do it?

The CES is a monthly survey of approximately 142,000 businesses and government agencies composed of approximately 689,000 individual worksites. As with all sample-based surveys, CES estimates are subject to sampling error. This means that while we work hard to ensure those 689,000 worksites represent all 10 million worksites in the country, sometimes our sample may not perfectly reflect all worksites. So the monthly CES estimates aren’t exactly the same as if we had counted employment from all 10 million worksites each month. To fix this problem, we “benchmark” the CES data to an actual count of all employees, information that’s only available several months after the initial CES data are published.

In essence, we produce employment information really quickly from a sample of employers, then anchor that information to a complete count of employment once a year.

The primary source of the CES sample is the BLS Quarterly Census of Employment and Wages (QCEW) program, which collects employment and wage data from states’ unemployment insurance tax systems. This is also the main source of the complete count of employment used in the benchmark process. QCEW data are typically available about 5 months after the end of each quarter.

Each year, we re-anchor the sample-based employment estimates to these full population counts for March of the prior year. This process, which we call benchmarking, improves the accuracy of the CES data. That’s because the population counts are not subject to the sampling and modeling errors that may occur with the CES monthly estimates. Since the CES data are re-anchored to March of the prior year, CES estimates are typically revised from April of the year before that up to the March benchmark. Then estimates from the benchmark forward to December are revised to reflect the new March employment level.
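To make the re-anchoring concrete, here is a minimal Python sketch. It is a simplified illustration, not BLS production code, and it rests on two labeled assumptions: first, that the March difference is phased in linearly over the 12 months from April through March (often called a "wedge-back"), so April absorbs 1/12 of the gap and March absorbs all of it; second, that months after March simply keep their original month-to-month changes, restarted from the new March level. The real procedure involves additional steps. The function names `wedge_back` and `carry_forward` and all of the numbers are hypothetical.

```python
# Simplified illustration of benchmarking; NOT the official BLS procedure.
# Assumption: the March benchmark difference is phased in linearly
# ("wedged back") over the 12 months from April through March.


def wedge_back(estimates, benchmark_level):
    """Revise 12 monthly levels (index 0 = April, index 11 = March).

    Month i receives (i + 1) / 12 of the March difference, so April gets
    1/12 and March gets the full amount, matching the benchmark exactly.
    """
    if len(estimates) != 12:
        raise ValueError("expected 12 monthly levels, April through March")
    diff = benchmark_level - estimates[-1]
    return [level + diff * (i + 1) / 12 for i, level in enumerate(estimates)]


def carry_forward(later_estimates, old_march, new_march):
    """Shift post-March levels by the March revision.

    Assumption: the published month-to-month changes after March are kept
    unchanged and simply restarted from the new March level.
    """
    shift = new_march - old_march
    return [level + shift for level in later_estimates]


# Hypothetical example: a sample-based series rising 100 jobs a month.
april_to_march = [100_000 + 100 * i for i in range(12)]  # March = 101,100
revised = wedge_back(april_to_march, benchmark_level=100_500)
print(revised[0], revised[-1])  # 99950.0 100500.0
later = carry_forward([101_200, 101_300], old_march=101_100, new_march=100_500)
print(later)  # [100600, 100700]
```

The linear phase-in avoids a sudden jump in a single month: the revised series still hits the full-count March level exactly, while the over-the-month changes from April onward are each adjusted by the same small amount.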

We will publish the final benchmark revision in February 2020, incorporating revisions to data from April 2018 through December 2019. (Thus, we’re not showing a 2019 number in the graph and table below.) On August 21, BLS released a first look at what this revision will be, which we call the “preliminary benchmark.” This preliminary benchmark gives us an idea of what the revised nonfarm employment estimates for March 2019 will be.

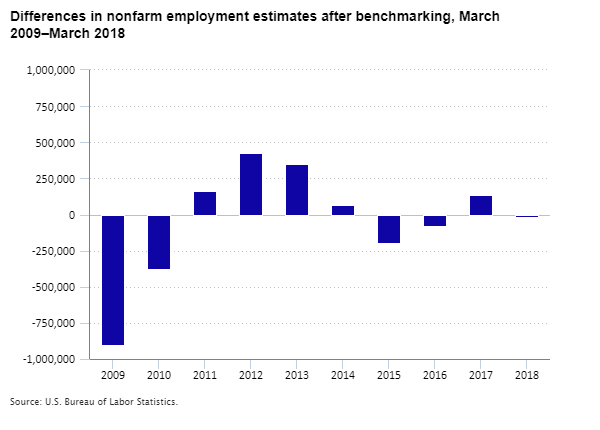

The size of the national benchmark revision is a measure of the accuracy of the CES estimates, and we take pride that these revisions are typically small.

For total employment nationwide, the absolute annual benchmark revision has averaged about 0.2 percent over the past decade, with a range from −0.7 percent to +0.3 percent.

The following table shows the total payroll employment estimated from the CES before and after the benchmark over the past 10 years. For example, pre-benchmark employment for 2018 was 147.4 million; post-benchmark employment was also 147.4 million.

| Year | Level before benchmark | Level after benchmark | Difference | Percent difference |
|---|---|---|---|---|
| 2009 | 132,077,000 | 131,175,000 | −902,000 | −0.7 |
| 2010 | 128,958,000 | 128,584,000 | −374,000 | −0.3 |
| 2011 | 129,899,000 | 130,061,000 | 162,000 | 0.1 |
| 2012 | 132,081,000 | 132,505,000 | 424,000 | 0.3 |
| 2013 | 134,570,000 | 134,917,000 | 347,000 | 0.3 |
| 2014 | 137,147,000 | 137,214,000 | 67,000 | <0.05 |
| 2015 | 140,298,000 | 140,099,000 | −199,000 | −0.1 |
| 2016 | 142,895,000 | 142,814,000 | −81,000 | −0.1 |
| 2017 | 144,940,000 | 145,078,000 | 138,000 | 0.1 |
| 2018 | 147,384,000 | 147,368,000 | −16,000 | <0.05 (negative) |
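The summary figures quoted earlier (an average absolute revision of about 0.2 percent, ranging from −0.7 to +0.3 percent) can be checked directly against the table above. The short Python sketch below recomputes each year's difference and percent revision; it assumes the percent is measured against the pre-benchmark level, and the names `BENCHMARKS` and `revision_stats` are illustrative only.

```python
# (year, level before benchmark, level after benchmark), from the table above
BENCHMARKS = [
    (2009, 132_077_000, 131_175_000),
    (2010, 128_958_000, 128_584_000),
    (2011, 129_899_000, 130_061_000),
    (2012, 132_081_000, 132_505_000),
    (2013, 134_570_000, 134_917_000),
    (2014, 137_147_000, 137_214_000),
    (2015, 140_298_000, 140_099_000),
    (2016, 142_895_000, 142_814_000),
    (2017, 144_940_000, 145_078_000),
    (2018, 147_384_000, 147_368_000),
]


def revision_stats(rows):
    """Return per-year (year, difference, percent), mean absolute percent, min, max.

    Assumption: the percent revision is measured against the
    pre-benchmark level.
    """
    results = []
    for year, before, after in rows:
        diff = after - before
        results.append((year, diff, 100 * diff / before))
    pcts = [pct for _, _, pct in results]
    mean_abs = sum(abs(p) for p in pcts) / len(pcts)
    return results, mean_abs, min(pcts), max(pcts)


results, mean_abs, low, high = revision_stats(BENCHMARKS)
for year, diff, pct in results:
    print(f"{year}: {diff:+,} ({pct:+.2f}%)")
print(f"mean absolute revision: {mean_abs:.1f}%, range {low:.1f}% to {high:.1f}%")
```

Running it reproduces the Difference column and prints a mean absolute revision of 0.2 percent with a range of −0.7 to 0.3 percent. The 2018 line also shows why that year appears as less than 0.05 percent: the revision works out to roughly −0.01 percent.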

The 2019 preliminary benchmark revision fits this pattern: its estimated difference of −0.3 percent is within the range of the past decade’s revisions. We provide this first look at the benchmark revision to give data users a sense of what we are seeing in the data. The final benchmark may be a little different; it could be higher or lower. But based on recent experience, we are confident the benchmark released next February will show only a moderate difference from what we’ve been publishing each month and will validate the accuracy of our monthly CES estimates.

Want to know more? See our Current Employment Statistics webpage, send us an email, or call (202) 691-6555.

United States Department of Labor