United States Department of Labor

Contract No: GS-00F-0078M

The Bureau of Labor Statistics (BLS) of the U.S. Department of Labor is the principal Federal statistical agency responsible for measuring labor market activity, working conditions, and price changes in the economy. Its mission is to collect, analyze, and disseminate essential economic information to support public and private decision-making.[1]

Private and public decisions related to labor markets and working conditions are increasingly being influenced by technological considerations. Spurred by a wave of technological developments related to digitization, artificial intelligence (AI), and automation, governments around the world have declared that the creation and deployment of these technologies present both important opportunities and challenges to their citizens.

Having better data related to the labor market and automation technologies could go a long way in helping address the concerns raised by technology. With these issues in the background, the BLS commissioned this report to identify constructs that would complement existing BLS products with a goal of ensuring that the necessary data exist that would allow stakeholders to assess the impact of automation on labor outcomes.

In the first section of this report, we review the social science literature on the effects of automation and related technologies on the labor force and identify some of the key theoretical constructs used in the scholarly literature.[2] In that section, we find some sources of agreement in the literature and highlight advances in our understanding of the topic. However, the section also underscores that there remains considerable uncertainty about how technology has affected the labor market in recent history and a great deal of uncertainty about the short- and long-term effects in the future. Many of the most influential papers in the field draw upon data sources and methods that have considerable limitations.

In the second section of this report, we review what international and domestic statistical agencies are doing to measure the key theoretical constructs linked to the labor market effects of technology.[3] We describe several areas where data gaps are large relative to the theoretical importance of the constructs.

This report concludes with an examination of the key constructs with a view to filling the data gaps identified. The aim is to present options that the BLS should consider in meeting the agency’s mission to further the understanding of how technology is affecting and is likely to affect the labor market. Many of the constructs identified are closely related to data that the BLS already collects, and so the final section looks to leverage existing sources as much as possible. In an attempt to create fully developed data collection strategies, we examined publicly available data on the costs of operating various BLS surveys to provide a qualitative assessment of how each option may broadly impact BLS costs.[4]

The primary lesson learned from this report is that researchers and, by extension, policymakers lack the data necessary to fully understand how new technologies impact the labor market. No individual agency or statistical system, in the U.S. or abroad, has developed a comprehensive approach to collecting data on all key constructs needed to assess the impact of AI, automation, and digitization on labor outcomes. These agencies face the challenge of measuring rapidly evolving technologies, as well as the difficulty of parsing a fragmented literature that has not yet provided clear guidance on what data are needed. However, by fully reviewing existing research and data collection efforts, this report takes an initial step toward bridging this crucial divide and helping the BLS become a leader in this domain.

The concept of technology is at the heart of macroeconomic analysis. In standard macroeconomic growth models, labor and capital are the key factors of production that generate economic value (Jones 2016). Basic macroeconomic accounting subtracts the value of these measurable factors (the cost of labor and capital) from Gross Domestic Product (GDP) and describes the residual as productivity growth. In these neoclassical models, this residual productivity growth is the only long-term driver of higher living standards, and it is commonly referred to as “technology.” In the simplest versions of this framework, technology makes labor more productive and results in higher average wages and purchasing power. As this review will discuss, scholars have deepened and complicated this framework in recent years, but a unifying theme is that technology is closely linked to productivity growth.
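The accounting described above can be sketched numerically. The snippet below is a minimal illustration, assuming a Cobb-Douglas production function with constant returns to scale, so that the residual equals output growth minus share-weighted input growth. The function name, the capital share of 0.35, and the growth rates are hypothetical values chosen for illustration, not figures from this report.

```python
def solow_residual(output_growth, capital_growth, labor_growth, capital_share):
    """Return the growth-accounting residual (often labeled "technology").

    Assumes Cobb-Douglas production with constant returns to scale, so the
    labor share is 1 - capital_share. All growth rates are annual and in
    decimal form (0.03 means 3%).
    """
    if not 0.0 <= capital_share <= 1.0:
        raise ValueError("capital_share must lie between 0 and 1")
    labor_share = 1.0 - capital_share
    # Growth attributable to the measurable factors of production.
    measured_inputs = capital_share * capital_growth + labor_share * labor_growth
    # Whatever output growth is left over is the residual.
    return output_growth - measured_inputs


# Hypothetical inputs: 3% output growth, 4% capital growth, 1% labor growth.
residual = solow_residual(0.03, 0.04, 0.01, capital_share=0.35)
print(f"Residual productivity growth: {residual:.2%}")
```

Because the residual is computed by subtraction, any mismeasurement of output or inputs ends up in it as well, which is one reason the measurement debates reviewed later in this section matter.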

Aggregate productivity growth has historically led to wage growth, but there are theoretical reasons why this may not hold in the future. One possibility is that an increasingly large share of GDP (or productivity gains) could go to capital instead of labor, rewarding investors but not workers. Secondly, even if some share of productivity gains goes to workers, the benefits could be unevenly distributed by level of skill or type of tasks performed. This review will discuss how economists have tried to assess the plausibility of these and related scenarios.

Since technology is so closely related to productivity, the review starts with how economists have interpreted productivity growth trends and how they relate to technological change. In the 18th and 19th centuries, technologies associated with the Industrial Revolution dramatically reduced the costs of producing food, clothing, and other goods—and through recording devices, radio, film, television, planes, and automobiles, the costs of communication and transportation. Gordon (2017) found that the most economically important innovations occurred from 1870 to 1970, a period associated with very rapid growth. Since then, he posited, productivity growth has slowed because digital technologies are fundamentally less economically important than those that preceded them, and indeed productivity growth has slowed across advanced industrial economies since the 1980s. For example, in the United States, productivity grew at a rate of 2.8% on an annual average basis between 1947 and 1973, but since then, it has been much slower, with the exception of the 2000 to 2007 period. From 2007 to 2017, average annual productivity growth was 1.3% (Bureau of Labor Statistics 2019a). Based on these considerations and related analysis, Gordon (2017) concluded that new technologies are having little impact on the economy and hence the labor market.
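To give a sense of scale for the growth rates quoted above, the following sketch compounds them over time. It is simple arithmetic under the assumption of a constant annual rate; the function names are illustrative and do not come from BLS.

```python
import math


def cumulative_growth(annual_rate, years):
    """Total proportional increase after compounding annual_rate for years."""
    return (1.0 + annual_rate) ** years - 1.0


def doubling_time(annual_rate):
    """Years needed for the level to double at a constant annual_rate."""
    return math.log(2.0) / math.log(1.0 + annual_rate)


# Average annual productivity growth rates quoted in the text above.
for label, rate in [("1947-1973", 0.028), ("2007-2017", 0.013)]:
    print(f"{label}: {rate:.1%} per year doubles productivity in "
          f"about {doubling_time(rate):.0f} years")
```

At 2.8% per year, productivity doubles in roughly 25 years; at 1.3% per year, doubling takes more than 50 years.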

Cowen (2011) has advanced a similar argument that previous technological advances were far more impactful than recent ones. Atkinson and Wu (2017) provided empirical evidence on this point by showing that recent decades have resulted in lower rates of creation and destruction of new occupations relative to previous eras in economic history.

From the point of view of these scholars, the latest wave of advanced technologies (i.e., digital technology, artificial intelligence (AI), and automation) is unlikely to affect labor markets nearly as much as the technological changes of prior generations.

However, other economists and scholars have reached what could be described as the opposite conclusion, arguing that new technologies have already started to profoundly transform the labor market and will likely accelerate in their effects. Klaus Schwab (2016), founder and executive chairman of the World Economic Forum, has gone as far as to label the current period of technological advancement the Fourth Industrial Revolution, emphasizing the rapid pace of change. Consistent with Schwab’s (2016) conceptualization, Gill Pratt (2015), who formerly managed a robotics program for the Defense Advanced Research Projects Agency, compared the latest wave of technologies to the Industrial Revolution, and wrote: “[T]his time may be different. When robot capabilities evolve very rapidly, robots may displace a much greater proportion of the workforce in a much shorter time than previous waves of technology. Increased robot capabilities will lower the value of human labor in many sectors.” Pratt listed several key advances he believes are driving technological changes: growth in computing performance, innovations in computer-aided manufacturing tools, energy storage and efficiency, wireless communications, internet access, and data storage. Brynjolfsson and McAfee (2014) have advanced similar arguments and claimed that information technology inhibited job creation after the Great Recession and is leading to income inequality and reduced labor demand for workers without technical expertise. Responding to arguments from those who see a slowing pace of innovation as the explanation for slowing productivity growth, they state: “We think it’s because the pace has sped up so much that it’s left a lot of people behind. Many workers, in short, are losing the race against the machine.”

Defenders of this high-impact view of technology must reconcile it with the observed slowdown in productivity growth. Brynjolfsson, Rock, and Syverson acknowledged the lack of strong growth in macroeconomic productivity data but argued that this is a measurement challenge associated with general purpose technologies like AI, which, they indicated, require significant complementary investments that are slow to reveal themselves as productivity advances. The argument draws on David (1990), who listed a number of reasons why computer and information-processing technology may require a long lead time before showing up in measured productivity statistics, including diffusion lags, measurement error, the slow depreciation of previous technologies, and the complexity of organizational restructuring.

A related point is found in Bessen (2015), who, in writing about machines of the Industrial Revolution, indicated that new inventions are slow to deploy because they require significant practical refinements and investments in human capital to be competitive with existing practices. However, new analysis of historical data suggests that new technologies, such as software and industrial robots, did have a significant impact on labor markets. Webb (2019) finds, using the text of both job task descriptions and patents to construct a measure of the exposure of tasks to automation, that occupations that were highly exposed to previous automation technologies saw large declines in employment and wages over the relevant periods.

Yet, with respect to computers and other information technology, the most significant advances were arguably made decades ago as Gordon (2017) has argued, and the technologies have already been widely adopted without dramatically affecting productivity trends. Detailed efforts by Byrne, Fernald, and Reinsdorf (2016) to examine the measurement challenges related to new technology found that even aggressive assumptions about missing data or poorly measured gains yield only small changes in productivity growth statistics.

Acemoglu and Restrepo (2019) provide an alternative response to this conundrum that bridges the two points of view. They argue that automation creates two effects that raise the demand for labor: a productivity effect that expands the demand for labor by making labor more efficient in the tasks left to it after automation (e.g., lawyers can compose briefs more efficiently using the internet and writing software) and a reinstatement effect that creates new demand for labor as automation creates new tasks (e.g., developing software, managing information networks). Automation also creates a displacement effect that substitutes for labor (e.g., online airline and hotel booking platforms reduce demand for travel agents). The net effect on labor depends on the combination of these three effects, which vary by technology. For weak technologies that are only modestly productivity-enhancing and don’t generate new tasks, the displacement effect can dominate the productivity effect. One example Acemoglu and Restrepo (2019) provide is the automation of call processing centers. Humans tend to be better at solving customer problems than automated voice recognition software but less efficient on net because of higher costs. If this scenario is indicative, automation could erode the labor share of GDP without generating significant economic growth.
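The interplay of the three effects can be made concrete with a toy calculation. The sketch below is not Acemoglu and Restrepo's model; it assumes, purely for illustration, that the three channels can be expressed in percentage points of labor demand and added together. The class name and all magnitudes are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AutomationEffects:
    """Hypothetical changes in labor demand, in percentage points."""
    productivity: float    # remaining tasks are performed more efficiently
    reinstatement: float   # new tasks are created for labor
    displacement: float    # tasks shift from labor to capital

    def net_labor_demand(self):
        # Simplifying assumption: the three channels combine additively.
        return self.productivity + self.reinstatement - self.displacement


# Made-up magnitudes for two stylized technologies.
strong = AutomationEffects(productivity=4.0, reinstatement=2.0, displacement=3.0)
weak = AutomationEffects(productivity=0.5, reinstatement=0.0, displacement=2.0)

for name, tech in [("strong", strong), ("weak", weak)]:
    print(f"{name} technology: net change of {tech.net_labor_demand():+.1f} points")
```

In this stylized setup, the weak technology lowers labor demand even though it raises productivity slightly, which mirrors the call-center example in the text.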

Other scholars who characterize the potential of new technologies as high impact sidestep the issue of productivity decline by pointing out that the effects are coming in the near or not-too-distant future. In influential research, Frey and Osborne (2017) predicted that 47% of U.S. workers are at risk of having their jobs computerized. Subsequent studies by Organisation for Economic Co-operation and Development (OECD) economists have put the figure considerably lower using more detailed information on how tasks vary by occupation (Arntz, Gregory, and Zierahn 2016).

Overall, even at the highest conceptual level, economists and scholars remain divided as to the effects of new technology on the macroeconomy and the labor market. Moreover, this literature has not yet been well integrated with the potential effects of other macroeconomic changes such as immigration, trade, and demographic patterns, all of which put additional constraints and pressures on the labor market (Schwab 2016). The remainder of this review seeks to clarify the key issues of the theoretical debate, including how technologies will affect the distribution of income between workers of different occupations or of different education levels, and how technologies will affect the returns to capital compared to labor. The review will also examine efforts to resolve these issues in empirical work and point to the key constructs that will need to be addressed.

Automation is the broadest definition of new technologies, and thus will serve as the main focus for this discussion.

Automation is defined as the substitution of non-human value for human production value. Following Acemoglu and Restrepo (2019), we define automation as “development and adoption of new technologies that enable capital to be substituted for labor in a range of tasks” (p.3).

Put another way, automation refers to any instance where capital replaces labor as the source of value in the chain of production. The production of any good or service can be defined as the performance of specific tasks, each of which, in theory though not in reality, can be performed by a human or by something else, such as a calculator, computer, algorithm, or machine. The automated component is simply the source of value-added that is not performed directly by humans.

To take an intuitive example, a vending machine performs the specific tasks of registering a customer’s request for a drink or snack, processing payment, and dispensing the product. It does not, however, grow the ingredients, harvest and process the ingredients, design, manufacture, or market the final product, transport the components or final product, install or replace the product, repair or maintain itself, or protect itself from theft, though those are all valuable tasks in the value-chain for delivering a candy bar to a customer from a vending machine.

This review will discuss automation with respect to the Industrial Revolution’s technologies but will focus on technologies introduced in recent decades and associated with computers and computer-controlled machines. AI, machine learning, and digitization are specific manifestations of automation.

Digitization refers to the translation of information into a form that can be understood by computer software and transmitted via the internet (Goldfarb and Tucker 2019). The closely related concept of “digitalization” encompasses this meaning of digitization but is used more broadly to refer to the diffusion of digital technologies (technologies that process or transmit digital information) into business operations and the economy (Muro et al. 2017; Charbonneau, Evans, Sarker, and Suchanek 2017). Many of the aforementioned technological changes described by Pratt (2015) are relevant to the growing importance of digitization, especially internet access and speed, wireless communication, processing speed, and data storage efficiency. Taken together, these changes have encouraged automation. Many services once performed by humans—like the trading of financial assets, banking, accounting, processing orders for food and retail goods, the coordination of transportation, creating and confirming reservations at restaurants or accommodations, searching publications and media content, and monitoring energy usage—are now routinely handled by software as a result of digitization.

In Goldfarb and Tucker’s (2019) review of the literature on how digital technologies shape economic activity, they conclude that digital technologies lower the costs of five important economic activities: 1) search for specialized labor or products, 2) replication, reproduction, and copying, 3) acquiring or sharing goods and information, 4) “tracking” or identifying individuals and their preferences, and 5) verification or assessing the quality of products and services. These activities could be broadly classified as the transmission of information.

When applied to the labor market, their review suggests a number of consequences, which will be addressed in more detail subsequently. The effect on search costs should facilitate the specialization of labor and allow for specialized niche producers; the lowering of reproduction costs creates ambiguous effects for individuals and companies that own digitized intellectual property (e.g., video, literary, music). As long as they can enforce their ownership, it may increase profit margins because they can scale up distribution with relatively little cost; however, using that distribution channel also opens them up to more intense competition. The falling cost of obtaining and sharing information could increase the value of workers who deal in specialized knowledge or subject matter expertise, as it would allow them to acquire data and interpret data more readily, via consulting services, for example. The falling cost of search, information, tracking, and verification has already increased the demand for application developers, website developers, and computer programmers whose skills are needed to allow individuals and companies to participate in the growing digital economy and take advantage of the trends mentioned.

There is no consensus in the economics or computer science literature as to what is exactly meant by “artificial intelligence.” From our perspective, AI is a subset of automation technologies that is distinct from digitalization but may utilize digitization technologies. We define AI as the automation of cognitive tasks that are part of the production chain; we distinguish cognitive tasks from those that involve only the manipulation of physical objects. In this sense, trucking services do not presently use AI in the truck itself, but a driverless truck would, since driving is a cognitive task whereas transportation itself is not. This is consistent with a more general definition offered by an expert committee on AI, which defined it as “that activity devoted to making machines intelligent” (Stone et al. 2016, p.12).

The expansion of automation into cognitive tasks through AI raises questions about the feasibility of automating tasks that were previously thought to be non-automatable. Famous recent examples of AI include IBM’s creation of Deep Blue and Watson, algorithms that defeated champions in chess and the game show Jeopardy, respectively. Other examples include the development of AlphaGo by DeepMind, a Google-acquired company, which created an algorithm that could defeat the leading Go champion, and Siri, acquired by Apple, which developed sophisticated voice-recognition software.

Agrawal, Gans, and Goldfarb (2019) examine the economics literature on AI and argue that the important advances in AI could be characterized as machine learning and essentially involve prediction. The argument is that the recent advances in AI have been mostly limited to machine learning, and machine learning entails prediction (as in estimating an outcome based on measurable variables or features), using techniques such as maximum likelihood, neural networks, and reinforcement learning. Agrawal, Gans, and Goldfarb (2019) indicate that the economic effects of machine learning on labor are varied, complex, and not well understood at this point. Some predictions may replace human decision-making and labor, but many others complement human labor and make it more efficient. They summarize their conclusion by stating:

“Overall, we cannot assess the net effect of artificial intelligence on labor, even in the short run. Instead, most applications of artificial intelligence have multiple forces that impact jobs, both increasing and decreasing the demand for labor. The net effect is an empirical question and will vary across applications and industries” (Agrawal, Gans, and Goldfarb 2019, p.34).

Other scholars have a more expansive interpretation of what has been accomplished in AI (Brynjolfsson and McAfee 2014; Ford 2015; Stone et al. 2016). A committee of interdisciplinary scholars, including economist Erik Brynjolfsson, has written more broadly about the tasks that can be performed by AI, including object and activity recognition, language translation, and robotics (Stone et al. 2016). They claim that AI has mostly affected workers performing routine tasks in the middle of the wage distribution, but that AI is likely to gradually automate cognitive tasks that are not currently considered routine, which may include, for example, legal case searches performed by first-year lawyers. In summary, they speculate that “AI is poised to replace people in certain kinds of jobs … However, in many realms, AI will likely replace tasks rather than jobs in the near term, and will also create new jobs … AI will also lower the costs of many goods and services, effectively making everyone better off” (Stone et al. 2016, p.8).

Modern macroeconomic growth theory generally posits a positive relationship between productivity and wage growth. The underlying implication is that technological change—which is generally regarded as the fundamental cause of growth in neoclassical models—should improve the real incomes of workers. This section will review historic patterns and theoretical conditions when this basic prediction may hold or fail, depending on factors like the extent to which new technology displaces work and rewards capital over labor or is skill-biased. After briefly discussing the Industrial Revolution, we discuss the literature on skill-biased technological change, followed by the introduction of task-based frameworks, and the application of task‑based frameworks to automation.

In the midst of the Industrial Revolution, Karl Marx (1867) famously stated that the accumulation of capital led to the impoverishment of laborers. His argument was that investment in machines was both labor‑displacing and labor-complementing. He believed business owners invest in labor-saving machines when wages get too high, thus creating a “reserve army of labor” that would bid wages back down. Yet, the expansion of production also required workers. As he worded it: “Capital works on both sides at the same time. If its accumulation, on the one hand, increases the demand for labour, it increases on the other the supply of labourers by the ‘setting free’ of them” (Marx 1867, sect. 3, last para.).

Economic historians have since rejected Marx’s prediction that the real wages of workers would remain stagnant in modern economies. As Keynes (1978) predicted, living standards have increased considerably and unemployment resulting from technological progress proved to be only temporary. There is no debate among economists that living standards are dramatically higher now than in the 19th century in rich countries. Not only is the purchasing power of income orders of magnitude higher, but ordinary people, workers, and business owners enjoy far greater health and longevity (Deaton 2016). Marx’s predictions were also strikingly inaccurate even during his own era. Data from Gregory Clark’s (2005) research on the Industrial Revolution shows that the wages of workers rose rapidly in England. In fact, from 1850 to 1900, real wages of building workers doubled in England as capital accumulation and education increased.

More detailed accounts of specific sectors on the cutting edge of new technologies reveal similar dynamics of rising wages and living standards for workers, as new technologies diffused. Economic historian James Bessen calculated the real hourly wages for weavers and spinners, roles that were using cutting-edge technologies in factory settings. From 1830 to 1860, these wages remained relatively stagnant, but grew rapidly from 1860 to 1890. Bessen’s (2015) explanation was that labor markets were relatively uncompetitive during the earlier phase, and workers had fewer alternative sources of employment (consistent with Marx’s perspective), but as technological change expanded economic growth and created new sources of employment, even workers with modest skills, like spinners, saw their wages increase, and those with more specialized technical skills—weavers—benefited disproportionately.

Aside from average wage patterns, economists are also interested in understanding the consequences of technological innovation on the income distribution. Income inequality fell dramatically for England following the Industrial Revolution, as documented by Clark (2008) and Lindert (1986). In the U.S., Lindert and Williamson (2016) found that income inequality rose for much of the 19th century (from 1800 to 1860) from a low start, plateaued until around 1910, and declined sharply thereafter until the 1970s. This is consistent with evidence from Goldin and Katz (2010) that the wages of high-school educated workers grew more rapidly than the wages of college-educated workers from 1915 to 1980. Piketty, Saez, and Zucman (2017) found the same broad reduction in income inequality as measured by the share of national income held by the top 1% of earners, which fell from 20% to 10% from 1930 to 1980 (World Inequality Database). This was a period of rapid innovation and productivity growth. A major focus of the economics literature in recent years has been explaining why income inequality started rising again around 1980.

Economic historians have also examined and debated to what extent the technologies of the first and second waves of the Industrial Revolution could be regarded as leading to an increase or decrease in the demand for skills. More formally, scholars have examined whether or not technology is complementary with skilled labor.

Within manufacturing and production work, Attewell (1992) reviewed the literature on the “deskilling” and skill upgrading effects of technology and concluded that the evidence of systematic deskilling is mixed, though there are many specific examples of it occurring. Attewell (1992) found thin evidence for one interpretation of the deskilling theory: investment in machines in order to reduce reliance on skilled workers. Instead, he refers to many instances of workers developing advanced skills in relation to the use and maintenance of the new machines. Consequently, with respect to the early to mid-20th century, Attewell (1992) noted many examples of new skills emerging in response to technological demands placed on production workers, even when the technologies performed tasks that required high levels of training and skills. Across the economy at large, Attewell (1992) noted a stronger consensus across studies that technology led to a higher demand for skills, as shown, for example, by the secular rise in educational attainment.

Some scholars, such as Goldin and Sokoloff (1982) and Humphries (2013), interpreted the heavy reliance on women and children during the mid-19th century as evidence that industrial machinery disproportionately increased demand for low-skilled entry-level work and was thus deskilling. Nuvolari (2002) identified the deskilling effects of technology as one of the motivations for political resistance to factory work in England during the 18th and 19th centuries. Likewise, De Pleijt and Weisdorf (2017) identified a large shift in the share of occupations from semi-skilled to unskilled (using early 20th century categorizations), with only a small shift toward high-skilled workers. By the early 20th century, however, technology and skill became complementary, according to Goldin and Katz (1998), who found a positive relationship between the skill level of blue-collar workers and investment in capital per worker from 1909 to 1940. Their interpretation of the evidence posits the early 20th century as a turning point for skill-technology complementarity.

One complication to this debate is how skill is defined. Disputing the deskilling literature, Bessen (2015) used worker-level microdata to show that employers invested in training for female workers during the 19th century, and they became far more productive with experience. Moreover, as production became more mechanized, the remaining non-automated tasks demanded higher rather than lower levels of skill, as evidenced by the rising positive relationship between on-the-job learning and worker productivity. Bessen (2011) interpreted this and related evidence as indicating that early industrial technologies required and rewarded high levels of skill that could be acquired on-the-job but did not require formal education.

A well-established literature in labor economics holds that technology has increased the productivity of workers with a college education more than that of workers with less education. Under this theory, the productivity gap explains the rise in earnings for college-educated workers relative to the earnings of non-college-educated workers, despite the increase in the labor supply of college-educated workers.

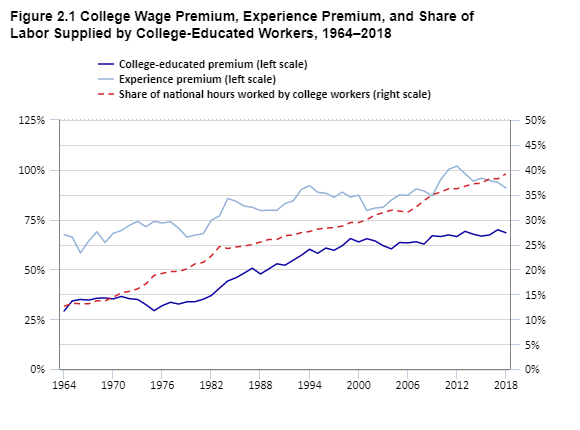

The basic empirical facts for this theory are illustrated in Figure 2.1. Starting around 1980, the incomes of college-educated workers have increased relative to those of workers with a high school education, adjusting for other observable factors. This gap is known as the college earnings premium, and it increased from 34% in 1980 to 68% in 2018. A surprising aspect of this rising premium is that the share of hours worked by college-educated workers has nearly doubled, from 20% in 1979 to 39% in 2018. In a simple supply-demand framework, this suggests that demand for college-educated workers has outpaced the steady increase in supply.

Source and Note: Author analysis of IPUMS-CPS. Premium is calculated by year-specific regression of log of total personal income on a categorical variable for having at least four years of college relative to high school (which is the reference category), a binary variable for less than high school, a binary variable for some college but less than four years, gender, the number of hours worked last week, 10 age categories in roughly five-year bands, with workers aged 20-24 in the reference group. Experience premium is coefficient on age category of workers aged 50-54 (the highest across all years) relative to workers aged 20-24. Population is restricted to workers under the age of 65. The share of national hours worked by college-educated workers is the sum of hours worked per year (assuming hours worked per week is consistent across 52 weeks of the year) for college-educated workers divided by the total number of hours worked.
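The hours-share calculation described in the note can be sketched as follows. This is a minimal illustration on made-up records, not IPUMS-CPS data; the tuple layout and function name are assumptions, and the sketch omits survey weights, which a tabulation of survey microdata would typically apply.

```python
WEEKS_PER_YEAR = 52  # the note assumes weekly hours hold for all 52 weeks


def college_hours_share(workers):
    """Share of total annual hours worked by college-educated workers.

    workers: iterable of (has_four_year_degree, hours_last_week, age) tuples.
    Workers aged 65 or older are dropped, matching the note's restriction.
    """
    college_hours = 0.0
    total_hours = 0.0
    for has_degree, hours_last_week, age in workers:
        if age >= 65:
            continue
        annual_hours = hours_last_week * WEEKS_PER_YEAR
        total_hours += annual_hours
        if has_degree:
            college_hours += annual_hours
    if total_hours == 0:
        raise ValueError("no hours worked in the sample")
    return college_hours / total_hours


# Made-up records for illustration only.
sample = [
    (True, 40, 35),
    (False, 40, 42),
    (False, 20, 23),
    (True, 45, 70),   # excluded: not under 65
]
share = college_hours_share(sample)
print(f"College share of hours: {share:.0%}")
```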

This literature has a long history, with many scholars contributing to theoretical and empirical studies. Among the seminal papers on this is work from Katz and Murphy (1992), who found that the rising college earnings premium could be linked to evidence of rising relative demand within industries for college‑educated workers. In explaining the college premium over an even longer period, Goldin and Katz (2010) emphasized a slowdown in the growth rate of the supply of college-educated workers, in the midst of stronger increases in demand, as being primarily responsible for the rising premium since 1980.
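The supply-and-demand logic behind this literature can be illustrated with a stylized calculation. The sketch below assumes a two-skill constant-elasticity-of-substitution setup in which the log relative wage equals the gap between log relative demand and log relative supply, divided by the elasticity of substitution. It treats the quoted earnings premium as a proportional wage gap and the hours shares as relative supply (lumping all non-college workers together and ignoring the one-year difference in start dates), and the elasticity of 1.4 is an assumed illustrative value. None of these choices is taken from this report.

```python
import math


def log_relative_supply(college_share):
    """Log ratio of college to non-college hours, given the college share."""
    return math.log(college_share / (1.0 - college_share))


def implied_demand_shift(premium_start, premium_end,
                         share_start, share_end, sigma):
    """Change in log relative demand implied by a two-skill CES model.

    Rearranges  log(w_c / w_h) = (D - log(N_c / N_h)) / sigma  in differences.
    Premiums are proportional gaps (0.34 means 34%); sigma is the elasticity
    of substitution between the two groups.
    """
    d_log_wage = math.log1p(premium_end) - math.log1p(premium_start)
    d_log_supply = (log_relative_supply(share_end)
                    - log_relative_supply(share_start))
    return sigma * d_log_wage + d_log_supply


# Premium and hours shares are the figures quoted above; sigma is assumed.
shift = implied_demand_shift(0.34, 0.68, 0.20, 0.39, sigma=1.4)
print(f"Implied rise in log relative demand: {shift:.2f}")
```

Under these assumptions, relative demand must have risen by more than relative supply for the premium to increase at all, which is the sense in which demand "outpaced" supply.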

Other research makes a more direct link between new technologies and changes in the wage structure. Krueger (1993) found that use of computers was associated with more highly educated workers and predicted higher earnings levels and growth. Closely related work by Autor, Katz, and Krueger (1998) found further evidence that industries that have invested more heavily in computers exhibited a sharper rise in demand for educated workers. Michaels, Natraj, and Van Reenen (2014) further demonstrated that investment in information and computer technology and technology-related research and development can account for up to a quarter of the growth in the college earnings premium and demand for educated labor.

While the theory has been influential, not all economists agree with this interpretation of the college premium or its larger implication in establishing skill-biased technological change. DiNardo and Pischke (1997) ran what might be considered a placebo test using an analysis similar to that performed by Krueger (1993). They found that the labor market returns to pencil use show properties similar to those of the return to computer use, despite the fact that nearly every worker could, in principle, use a pencil. They caution against interpreting the computer wage premium as suggesting that computer skills specifically have led to higher wages. Rather, they suggest that selection bias is a likely possibility, since more skilled workers may be more likely to use both a computer and a pencil. If so, the skill-biased technological change literature may simply be observing a rising relative demand for education that is unrelated to technology, or at least to any direct effect of technology on the productivity of college-educated workers.

Card and DiNardo (2002) also conveyed skepticism of the skill-biased technological change literature, pointing out that the framework relies entirely on supply, demand, and technology, ignoring well-established sources of variation in earnings, such as unions, efficiency wage premiums, minimum wage laws, and economic rents. Empirically, the theory also runs into various problems, they argued, in that wage inequality narrowed during the 1990s even as information technology deepened in its diffusion and adoption by every conventional measure. They also reviewed complications in applying the skill-biased technology framework to patterns within age, race, and gender groups, where the college wage premium plays out differently in ways that are inconsistent with computer or technology use. Lemieux (2006) also documented empirical challenges to the framework and related rising variance in wages to demographic changes in the workforce.

Bessen’s (2015) work on the Industrial Revolution analyzed the complex relationship between technology, formal education, and skill (which comes from a mix of formal education, training, and experience). In Bessen’s account, new technologies with broad applications—like information technology but also many of the machines of the Industrial Revolution—create demand for workers with high levels of cognitive ability, as measured by their ability to rapidly learn and master new skills. Thus, the earliest workers using weaving machines were far more literate (or educated) than the general population, even though literacy was not needed to use the machine. This relationship creates an education premium, but ultimately, specific technical skills—not the sort of education acquired through college—are needed to adopt and make use of new technologies. When the technical skills became standardized, formal education became less valuable, and factory workers gradually became less literate. As Bessen wrote:

“Thus, while demand for college graduates has grown in relative terms, it appears to be mainly because college-educated workers are better at learning new, unstandardized skills on the job, not because their college education conferred specific technical skills … As technical knowledge in these occupations becomes increasingly standardized, more and more workers will be able to acquire the needed skills without a college education, in formal training provided by employers or vocational and technical skills” (Bessen 2015, pp. 145-146).

Despite the decline in formal education (literacy), Bessen (2015) found that skill was highly rewarded during the Industrial Revolution. Weavers were paid based on how many pieces of cloth they produced, and employers and employees invested heavily in on-the-job training. He found that more experienced (and hence skilled) workers produced substantially more cloth per hour.

Consistent with Bessen’s (2015) theory that technological change tends to reward informal skills, the premium for experience has also increased since 1980 and, while difficult to compare, is larger than the college premium as measured here. Figure 2.1 measures the experience premium as the difference in log income between workers aged 50–54 and workers aged 20–24. That premium increased from roughly 67% in 1980 to 91% in 2018.

The results of a firm-level study from Bresnahan, Brynjolfsson, and Hitt (2002) are relevant to Bessen’s (2015) interpretation. They found evidence that firm investments in information technology increase worker autonomy and the use of smaller teams, which also coincided with higher demand for skilled workers. In this way, the adoption of technology has rewarded skilled workers, individuals whom managers trust to be more autonomous.

From another point of view, the evolution of the income distribution in the U.S. has raised theoretical concerns. In light of work by Thomas Piketty and collaborators (2015), the rise of income shares going to the top 1% or top 10% of income earners has been difficult to reconcile with a skill-biased technological change framework. In an era when roughly one-third of workers have a college degree, it is not obvious why most of the gains would have gone to the top 1% to 10%. One potential solution is to appeal to the increasing importance of capital income, which may accrue to the owners of technology-producing companies, but that would not account for the fact that labor income (rather than capital income) drove rising levels of inequality from 1980 to 2000, as measured by top 1% shares.

Consistent with the basic tenets of capital-skill complementarity and skill-biased technological change, a skill premium, whereby advancing technology favors relative demand for highly skilled workers, has been evidenced as a widespread global trend, from India (Berman, Somanathan, and Tan 2003) to Hungary (Kezdi 2002), in low- and middle-income countries (Conte and Vivarelli 2011), and in a range of both developed and developing countries (Burnstein and Vogel 2010). However, technology advancement is not the only relevant factor: imported skill-enhancing technology (Conte and Vivarelli 2011), foreign investment (Kezdi 2002), purchase of foreign machinery for technological progress (Gorg and Strobl 2002), and trade and multinational production (Burnstein and Vogel 2010) all appear to contribute to the skill premium. For example, Burnstein and Vogel (2010) identified that U.S. trade and multinational production between 1966 and 2006 account for one-ninth of the 24% rise in the U.S. skill premium. In their model, multinational production appears to be more influential: the rise in the skill premium caused by trade is 1.8% in the U.S. (and other skill-abundant countries) and 2.9% in skill-scarce countries, whereas, considering multinational production, the rise in the skill premium is 4.8% in the U.S. (and other skill-abundant countries) and 6.5% in the skill-scarce countries.

In a review and critique of the skill-biased technological change literature, Acemoglu and Autor (2011) described two important features of these models. First, skills are typically thought of (or at least measured) as entirely determined by college education. Second, technology is modeled as factor augmenting, meaning it makes skilled workers more productive but does not replace labor. A further implication of the canonical model is that technological change increases the wages of both high- and low-skilled groups. Thus, the skill framework cannot explain why wages for low-skilled workers might experience real decline, unless their relative supply increases. Since the relative supply of low-skilled workers has fallen (see Figure 2.1), this framework does not explain why real wages for low-skilled workers have declined or been stagnant, depending on the measures used.

Acemoglu and Autor (2011) proposed a task-based framework where tasks are defined as units of work activity that produce output and skills refer to the ability to perform tasks, which may or may not be related to college education. In this view, occupations are “bundles of tasks.” Looking at it from this richer framework confers several advantages, including the ability to understand occupational growth patterns that differ within education levels and the capacity to model machines as potential substitutes for work—rather than only labor-augmenting. Still, this framework does not imply that machines always replace human workers. Rather, a machine may perform some aspect of an occupation—a specific task—while the human worker performs the other tasks associated with the occupation.

Several papers have used some version of this task-based framework to investigate how technology has affected the labor market. Autor, Levy, and Murnane (2003) argued that computers are especially efficient relative to labor at routine tasks, and the falling price of computers has lowered wages and demand for routine human labor while increasing relative compensation of non-routine labor. Autor and Price (2015) extended the earlier analysis and found additional evidence that the task content of U.S. labor has become less routine (measured as more analytical and interpersonal) from 1980 to 2009, whereas the largest losses in employment shares have come from routine cognitive work and manual labor. Atalay et al.’s (2017) findings confirmed the broad patterns of shifts toward non-routine labor using a database of job postings that they constructed from 1960 to 2000. Spitz-Oener (2006) analyzed how the task content of occupations has changed in western Germany and found a large shift within occupations toward non‑routine tasks and away from routine tasks. This change, she stated, explains a larger fraction of the shift toward more educated workers.

In thematically related work, Autor and Dorn (2013) ranked occupations by wages—which they used as a proxy for skill—and found that occupational growth was faster from 1980 to 2005 for high- and low-paying occupations relative to “middle-skilled” occupations, which would include many production occupations within the manufacturing sector and clerical jobs across a wide range of industries. This is referred to as job polarization. They posited that computers have displaced routine manual and cognitive tasks, but not low‑skilled services, which are often not routine. Other studies have found evidence consistent with this pattern for the U.S. (e.g., Autor, Katz, and Kearney 2006; Autor, Katz, and Kearney 2008; Autor and Dorn 2013; Holzer 2015). Goos and Manning (2007) and Goos, Manning, and Salomons (2014) found a similar polarizing pattern of job growth by occupation for Britain (from 1979 to 1999). Katz and Margo (2014) found evidence that the “hollowing out” pattern also described the U.S. labor market from 1850 to 1910.

Growth projection estimates suggest that these patterns may continue. Bughin et al. (2018) took a task‑based approach to project skill-demand shifts in hours worked per week from 2016 to 2030. According to their estimates, there will be an 11% decline for physical and manual skills and a 14% decline for basic cognitive skills (e.g., basic literacy). In contrast, they project a 9% increase for higher cognitive skills (e.g., creativity, problem-solving), a 26% increase for social and emotional skills, and a 60% increase in technological skills.

Similarly, an analysis of O*NET occupation skills and work activities for the 20 occupations projected to grow fastest from 2016 to 2030 (Bureau of Labor Statistics 2019b) revealed that 95% require advanced cognitive skills and 85% require socioemotional skills, whereas 65% require basic cognitive skills and only 15% require manual labor skills (Maese 2019). Among these fast-growing jobs, there are differences in skill demand based on median wage (using 2018 median wage): High-paying jobs are more likely to require advanced technical skills (e.g., data mining, network monitoring), to involve technological work tasks (e.g., analyzing data) and advanced cognitive work tasks (e.g., making decisions and solving problems, thinking creatively), and are less likely to require manual labor tasks (e.g., repairing and maintaining mechanical equipment) (Maese 2019).

The aforementioned papers on skill-biased technological change and task-based literature have either assumed technology increases labor demand or only threatens workers who perform routine tasks. More recent research broadens the role of technology to include the ability to perform any task; this meets the definition of automation described in Section 2.2.1.

The model introduced by Acemoglu and Restrepo (2019) describes three classes of technology: automation, new task generation, and factor-augmenting technologies (which increase the productivity of labor or capital in doing any task). A new technology may contain all or multiple aspects of these effects. An industrial machine might automate some assembly tasks performed by humans but create demand for new tasks in programming, installation, maintenance, and repair. It is less realistic to imagine technologies that make labor or capital better at any task, as the authors point out.

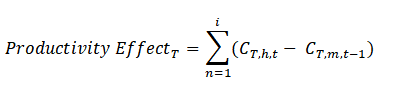

Acemoglu and Restrepo’s (2019) framework classifies the effects of these groups of technologies. When a technology automates tasks, it creates demand for labor through a productivity effect, reduces demand for labor through a displacement effect, and has ambiguous effects, depending on how it changes the composition of work done by industry. A technology might also increase the number of tasks performed in the economy, which reinstates demand for labor and creates a productivity effect.

In the empirical section of the paper, Acemoglu and Restrepo (2019) examine trends in U.S. data and distinguish between 1947 to 1987 and 1987 to 2017. In the earlier period, they measure a displacement effect from new technologies that amounted to 0.48% per year, which was offset by a reinstatement effect and strong productivity growth (2.4% per year). The net result was rising real wages (2.5% per year) and strong labor demand. In the period since 1987, wage growth has been much weaker (1.3% per year) as a result of weaker productivity growth (1.5% per year), a slowdown of the reinstatement effect (from 0.47% to 0.35% per year), and an acceleration of the displacement effect (from 0.48% to 0.70%). Using industry-year variation within the U.S., they find that the proxy measures for the use of automation and reliance on routine tasks within an industry predict larger displacement effects and smaller reinstatement effects. However, they also find that industries that rely more heavily on new occupations or occupations with new tasks have larger reinstatement effects.
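The shift between the two periods is easier to see when the displacement and reinstatement rates quoted above are netted against each other. The short sketch below does only that arithmetic. Treating the net effect as a simple difference is a simplifying assumption made here for illustration, and the function name `net_task_effect` is hypothetical; the full decomposition in the paper includes additional terms, such as the productivity and composition effects.

```python
# Annual rates in percent per year, as quoted in the paragraph above.
periods = {
    "1947-1987": {"displacement": 0.48, "reinstatement": 0.47},
    "1987-2017": {"displacement": 0.70, "reinstatement": 0.35},
}

def net_task_effect(displacement, reinstatement):
    """Reinstatement minus displacement, rounded to two decimals.

    A negative value means displacement outpaced reinstatement.
    """
    return round(reinstatement - displacement, 2)

net = {name: net_task_effect(**rates) for name, rates in periods.items()}
for name, value in net.items():
    print(f"{name}: {value:+.2f}% per year")
```

Under this simplification, the two effects roughly cancel in the earlier period, while displacement exceeds reinstatement by about a third of a percentage point per year after 1987.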

These results are consistent with earlier empirical work from Acemoglu and Restrepo (2017) on industrial robots, one specific form of automation technology. Using data on robots by industry for the U.S.—while identifying variation using European-industry trends to reduce reverse causality—they found that labor force participation fell in the commuting zones most exposed to robots, where exposure runs via initial employment at the regional and industry level multiplied by an index of industry-specific increases in robots per worker.
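The exposure measure described above has the form of a shift-share index: each commuting zone's initial industry employment shares are weighted by industry-level growth in robots per worker. The following is a minimal sketch under that reading. The zones, industries, and all numbers are hypothetical, and the sketch is not the authors' exact specification.

```python
import numpy as np

# Rows: commuting zones; columns: industries.
# Entries are initial employment shares (hypothetical values).
initial_emp_share = np.array([
    [0.6, 0.3, 0.1],   # zone A: concentrated in industry 0
    [0.1, 0.3, 0.6],   # zone B: concentrated in industry 2
])

# Hypothetical increase in robots per thousand workers, by industry.
robot_growth = np.array([5.0, 1.0, 0.0])

# Exposure of each zone: sum over industries of share * industry robot growth.
exposure = initial_emp_share @ robot_growth
print(exposure)
```

In this toy example, zone A is more exposed than zone B only because its initial employment is concentrated in the industry where robot use grew fastest.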

Using similar data but a different modeling and empirical strategy, Borjas and Freeman (2019) contrast the labor competition effects of robots to the effects of immigration and find that robots have a greater impact. Specifically, they find that industries with robots displaced two to three workers per robot or three to four workers in the most exposed groups, such as workers who either have low levels of education or perform highly automated tasks (as defined by workers through their responses to an O*NET item on work context: “How automated is your current job?”). They do not find negative wage or labor displacement effects for college-educated workers or workers in jobs that are not automated. They suggest these effects have historically been small from a macroeconomic perspective because the number of robots is small, but the effects could become macroeconomically significant if robots become much more widely adopted.

Going beyond the U.S., other cross-country empirical work suggests the productivity and reinstatement effects have substantially outweighed the displacement effect—at least for industrial robots. Graetz and Michaels (2018) acquired data on the purchase of industrial robots by country and industry and conducted an analysis across 17 countries from 1993 to 2007. They modeled robots as perfect substitutes for certain human tasks and assumed companies adopt robots when the profits from doing so exceed the cost of purchasing the robots. Their empirical analysis concluded that the adoption of robots increased GDP per hour worked (or productivity) with no effect on labor demand in the affected industries. Presumably, labor demand would have increased in other industries. In other words, industries operating in countries that were especially prone to adopt robots did not experience job growth that was any different than job growth in industries and countries with low adoption rates. Graetz and Michaels (2018) found that robot adoption predicts wage growth and lower prices for consumers, but employment shifts from low-skilled workers to middle- and higher-skilled workers. They used several techniques to verify whether their analysis could be interpreted as a causal effect and found evidence that it is.

Caselli and Manning (2019) introduce an alternative theoretical model that also draws on a task-based framework and defines technology broadly to be any capital investment that reduces the direct or indirect costs of something purchased by consumers. They then lay out a series of parsimonious assumptions and work out the logical outcomes with respect to effects on average wages. They assume interest rates are not affected by technology, so that the supply of capital is not constrained. Next, they distinguish between investment goods and consumer goods. They reason that if the price of investment goods (e.g., machines) falls relative to consumer and intermediate goods, workers must benefit, though not necessarily all, and the returns to investment capital will fall (though not necessarily the capital-labor ratio). When they further assume that workers can seamlessly switch occupations and retrain, they reason that all workers stand to gain from technological change. In reality, workers typically face a modest wage penalty after experiencing a layoff even six years later, suggesting that transitions are not seamless (Couch and Placzek 2010).

Still, Caselli and Manning’s (2019) analysis suggests that most plausible scenarios involving technological change will result in benefits to most workers. Yet, historical data analyzed by Webb (2019) indicates that occupations that were highly exposed to previous automation technologies experienced large declines in employment and wages. This suggests that AI, which the author finds is directed at high-skill tasks, may lead to the long-term substitution of high-skilled workers in the future.

The theoretical work described above identifies how economists believe technology is affecting labor markets, usually after attempting to isolate technological effects from other factors. However, regardless of the effect technology has had on the labor market, readers may want a broader sense of long-term labor market trends, irrespective of the underlying causal mechanisms.

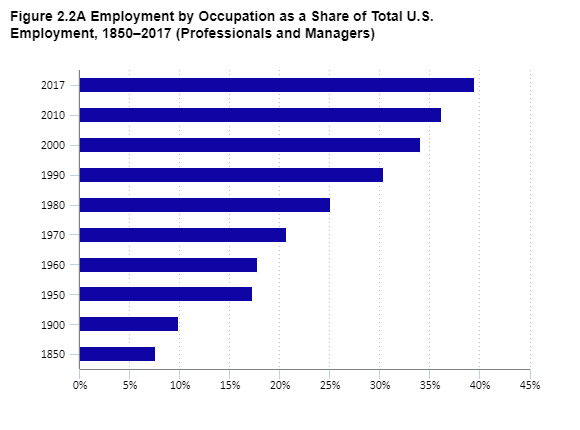

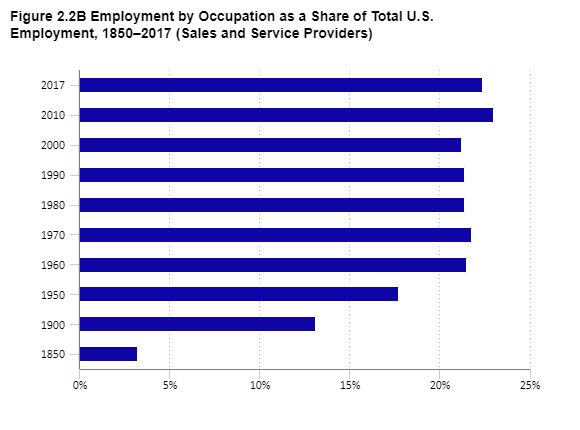

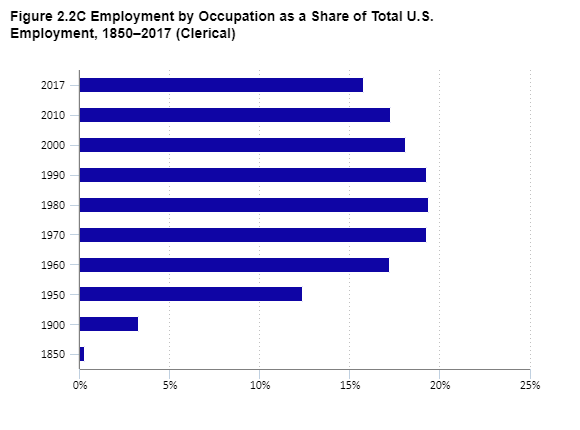

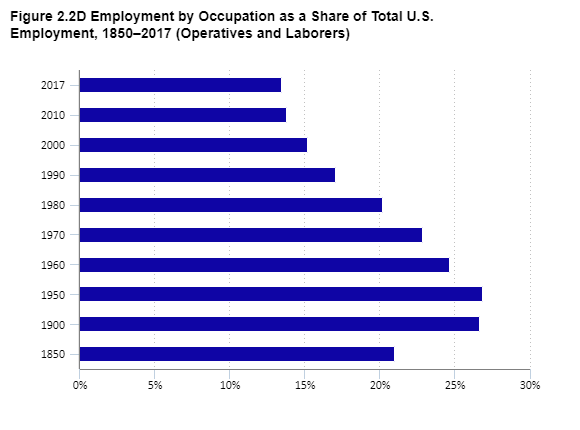

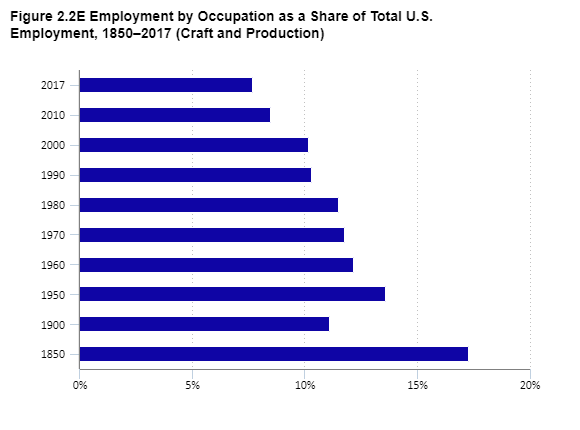

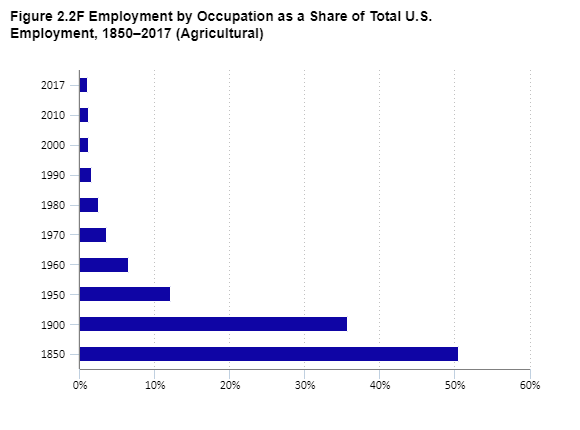

The Industrial Revolution and subsequent era of high productivity growth coincided with a major transformation of work in the U.S. In 1850, roughly half of workers were classified into farming or related agricultural occupations. By 1970, when Robert Gordon (2017) located the end of an economic revolution, the share of workers in farming occupations had fallen to just 4%. These data are shown in Figures 2.2A‑2.2F. Farming jobs were largely replaced with work in professional occupations, non-professional service occupations, and clerical services. Blue collar work peaked as a share of total employment around the middle of the 20th century and saw large losses—as a share of total employment—before the introduction of information technology. Since 1980, almost all of the net changes have been in professional services, with small gains from non-professional services. Consistent with the task-based framework of Acemoglu and Autor (2011), clerical occupations, which are typically classified as routine and automatable, peaked as a share of total employment in 1980 and have declined steadily with the spread of information technology. Professional service occupations, meanwhile, are classified as non-routine and cognitively demanding, and therefore most likely to be resistant to displacement by automation.

Economists at the Bureau of Labor Statistics have summarized related trends from 1910 to 2000 (Wyatt and Hecker 2006). In addition to the patterns described above, they noted the massive rise of jobs in healthcare occupations from 1910 (0.4 million jobs) to 2000 (9.1 million jobs), and the large-scale disappearance of private household jobs from 2.3 million in 1910 to 0.5 million in 1990. Similarly, Pilot (1999) documented the emergence of novel occupational categories, as well as the redescription and disappearance of others, from 1948 to 1998. Overall, 52 of the 209 occupational categories listed in 1948 were listed with the same detail in 1998 and an additional 78 with a change in detail or description of the category. Finally, 79 occupations were not listed in 1998 (including “adding machine servicemen” and “blacksmiths”). Analyzing data by industry, Fuchs (1980) described the broad pattern of transition away from agriculture toward manufacturing and services. He cited and largely agreed with earlier theoretical views that the rise of services follows from a higher income elasticity of demand for services relative to goods (meaning an extra dollar of income translates more readily into service demand rather than goods demand). Fuchs (1980) accepted this but indicated that the higher productivity of goods is also a factor. In Acemoglu and Restrepo’s (2019) framework, economic growth and the higher productivity of goods-producing sectors create a productivity effect and a reinstatement of labor effect outside of the goods-producing industries.

Data for figures 2.2A through 2.2F

Source: Author analysis of IPUMS USA, occ1950 variable, which is a consistent classification of occupations across all census years. Those occupations were reclassified into the higher-level categories shown above by the authors.

Even as manufacturing employment has fallen in relative importance in the U.S., “advanced industries” have continued to provide a stable source of employment and a growing source of economic output. Scholars at the Brookings Institution define advanced industries by their high levels of investment in R&D and their propensity to employ workers in science, technology, engineering, or mathematical (STEM) occupations (Muro et al. 2015). These industries are responsible for the creation of most of the new technologies in the U.S., as measured by patents, as well as all commercial information technologies, since the industries that produce these technologies (e.g., computer manufacturing, software, information, telecommunications, and computer services) are explicitly included.

These 50 industries are found in the manufacturing, energy, and service sectors, but the service side has accounted for all the net employment growth while employment in advanced manufacturing has declined. Together, advanced industries accounted for 8.7% of total employment in 2015, with 60% of those in services. In fact, four service industries account for the largest share of employment and over one-quarter of the total: computer systems design; architecture and engineering; management, scientific, and technical consulting; and scientific research and development (Muro et al. 2015).

The educational requirements to work in these industries are relatively high, often STEM-focused, and increasing. In 1968, 76% of workers in advanced industries never attended college. By 2013, that share fell to 25%. At the same time, all of the job losses for those without college have been in the high school or less category. The share of advanced industry workers with some college or an associate degree has slightly increased in recent decades and stands at 25% (Muro et al. 2015). Along these lines, Rothwell (2013) finds that roughly half of workers who are in STEM-intensive occupations have less than a bachelor’s degree, but often some form of postsecondary training or education. Across all levels of education, Muro et al. (2015) document a substantial wage premium for workers in advanced industries, possibly as a result of the value of intellectual property in these industries.

Projecting forward, these results suggest that demand for tertiary education will continue to dominate employment opportunities in technology-producing industries. However, a sizable and perhaps even growing share of jobs will be available for those with technical certifications or other sub-bachelor’s level credentials, some of which could be provided through high school education (Krigman 2014).

More recent work from Muro et al. (2017) found that demand for workers with high levels of skill in digital technologies has increased considerably in recent years, and the share of all workers in these occupations increased from 4.8% in 2002 to 23% in 2016. Moreover, they found that even occupations with medium or low digitalization skill requirements became more digital intensive from 2002 to 2016, suggesting that the task content of occupations has shifted broadly toward the need for increased digital literacy. Along these lines, the U.S. Department of Education, the U.S. Bureau of Labor Statistics, and scholars all predict further growth in STEM jobs (U.S. Department of Education 2016; Fayer, Lacey, and Watson 2017; Wisskirchen et al. 2017).

Demand for entirely new professions may also emerge within technology-producing firms. Specifically, new work will require professionals capable of training AI systems to perform intelligent tasks, such as teaching natural language processors and language translators, teaching customer service chatbots to mimic and detect the subtleties and complexities of human communication, and teaching AI systems (e.g., Siri and Alexa) to show compassion and to understand humor and sarcasm. There will also be a need for professionals who maintain and sustain AI systems to ensure that they are operating as intended and to address unintended consequences. In fact, this need exists today; currently, fewer than one-third of companies are confident in the fairness and transparency of their AI systems and fewer than half are confident in the safety of their systems (Smith and Anderson 2017; Wilson, Daugherty, and Morini-Bianzino 2017). Also in demand will be workers who can bridge the gap between high-tech professionals and the technologies they create, on one side, and businesspeople and consumers, on the other, by explaining and clarifying how AI systems work. As implied by the European Union’s General Data Protection Regulation, companies will need data experts, such as Data Protection Officers, to protect privacy rights and address related issues; in other words, companies will require professionals who can communicate technical details to non-technical professionals and consumers (Wilson, Daugherty, and Morini-Bianzino 2017).

There is a dearth of comprehensive analyses relating the adoption of new technologies to the demand for labor overall and by skill level. At the macroeconomic scale, De Long and Summers (1991) have found that investment in equipment—which captures the technologies used in the production of goods and services—increases the growth rate of GDP. Those results could be thought of as general effects that go beyond the firms that create or use technology to the economy as a whole, but they do not directly relate to the labor market.

Whether at the macroeconomic or industry level, a principal methodological difficulty in measuring the causal effect of technology adoption stems from identifying whether technological adoption is a driving force of outcomes or a response to a complex and potentially unobservable set of temporal, country, industry, or firm characteristics. A few papers have made explicit attempts to overcome these challenges by using firm-level data.

In quasi-experimental research, Gaggl and Wright (2017) tested the causal effect of information technology investment on labor demand by taking advantage of a tax incentive offered by the United Kingdom government between 2000 and 2004, which allowed small businesses to deduct information technology investment expenses from their tax bills. Treatment effects were identified by using the eligibility cutoffs in a regression-discontinuity design. The causal effect of the investment was to significantly increase employment, wages, and productivity. A decomposition of the employment effect showed a modest decrease in routine cognitive workers (in administrative positions), a sharp increase in non-routine cognitive workers, and no change in manual workers.
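The logic of a regression-discontinuity design around an eligibility cutoff can be illustrated with a small simulation. The sketch below is not the specification used by Gaggl and Wright (2017). It assumes a sharp design in which firms below a size cutoff are eligible, uses a local linear fit on each side of the cutoff, and runs on synthetic data with a built-in treatment effect of 2.0; the function name `rd_estimate` and all variable names are hypothetical.

```python
import numpy as np

def rd_estimate(running, outcome, cutoff=0.0, bandwidth=1.0):
    """Local linear sharp-RD estimate of the jump in the outcome at the cutoff.

    Units below the cutoff are treated (eligible), mirroring a size-based
    eligibility rule for small firms. Slopes may differ on each side.
    """
    x = running - cutoff
    keep = np.abs(x) <= bandwidth
    x, y = x[keep], outcome[keep]
    treated = (x < 0).astype(float)
    X = np.column_stack([np.ones_like(x), treated, x, treated * x])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta[1]  # coefficient on the treatment indicator

# Synthetic firms: size is measured relative to the eligibility cutoff.
rng = np.random.default_rng(1)
size = rng.uniform(-1, 1, 4000)
eligible = size < 0
employment_growth = (1.0 + 0.5 * size + 2.0 * eligible
                     + rng.normal(0, 0.5, 4000))

effect = rd_estimate(size, employment_growth)
print(f"estimated jump at the cutoff: {effect:.2f}")
```

The identifying idea is that firms just below and just above the cutoff are similar apart from eligibility, so a jump in the outcome at the cutoff can be attributed to the incentive rather than to firm size itself.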

Harrigan, Reshef, and Toubal (2016) also addressed concerns about causality in the adoption of technology. They used historical occupational data on the presence of “techies” within firms to predict future adoption of technology and identified the causal effect on job polarization and job growth. They defined “techies” as a set of workers in occupations that involve the installation, management, maintenance, and support of information and communications technology. These workers are mostly “in‑house” and not brought in as consultants; it is difficult for firms to scale up IT use without them. The result of this research is that IT use in France predicts skill upgrading—that is, a higher percentage of managerial and professional workers relative to lower-paid workers.

Cortes and Salvatori (2015) likewise used unusually detailed data at the firm level in the United Kingdom and found that the adoption of new technology, as reported by firm managers, was correlated with employment growth from 1998 to 2011, but did not predict a loss of routine work. They did not attempt to address concerns about the endogenous adoption of technology.

The papers described above are limited to non-industrial technologies. The results from papers studying industrial machines are more mixed (Section 2.3.4), with some finding negative effects on labor demand and others finding no effect (Acemoglu and Restrepo 2017; Borjas and Freeman 2019; Graetz and Michaels 2018). The consensus among these papers, however, is that industrial machines have displaced lower-educated workers. A methodological limitation is that each of those papers relies on country- or industry-level data. As a result, the analysis may be biased by firm-level characteristics and is difficult to relate to macroeconomic patterns that may affect firms differently for a variety of reasons.

Business survey evidence also suggests a positive relationship between labor demand and the adoption of new technology. Bughin et al. (2018) surveyed executives from large organizations and found that only 6% expect their workforce in the U.S. and Europe to shrink as a result of automation and AI. In fact, 17% expect their workforce to grow. Companies that see themselves as more extensive adopters of technology were somewhat more likely than those who described themselves as early adopters to project employment growth over the next three years. Qualitative evidence from industrial technology-using firms in the research of Kianian, Tavassoli, and Larsson (2015) also pointed to a positive relationship between labor demand and novel technologies.

Across a wide range of industries, technology also expands access to work through internet-enabled remote work. This opens the labor market to those who need to work from home, work flexible hours, or use other alternative arrangements, which can create opportunities for women, youth, older workers, and disabled workers (Millington 2017; World Bank Group 2016). Cramer and Krueger (2016) found that Uber’s mobile platform has increased the efficiency of the taxi service industry, thereby increasing the demand for drivers’ labor.

As defined above, automation is capital that replaces tasks performed by labor. As such, the adoption of automating technologies across the economy could affect the distribution of income between labor and capital. A change in national income in favor of capital would disproportionately reward individuals who earn income from investment and business ownership. A number of factors will moderate whether labor or capital rises as a share of national income in response to technological adoption: the absolute and relative price of capital compared to labor, how technologies affect labor productivity, the types of tasks performed by technology, and the adaptability of labor to perform new tasks.

Autor and Salomons (2018) used industry-level data across developed countries to estimate the effects of total factor productivity growth, which they liken to the adoption of new technologies, on employment and the labor share of income. They found that the direct effect of total factor productivity growth was a decrease in employment in the industries experiencing that growth, but that an indirect effect increased employment in other industries. Moreover, they found that productivity growth has coincided with a reduction in the labor share of income in industries experiencing productivity growth.

Autor and Salomons’ (2018) finding that highly productive industries have experienced a declining labor share of income is consistent with Elsby, Hobijn, and Şahin’s (2014) review of the labor share literature, in that they found changes within industries—particularly a declining share of income going to labor within manufacturing—explained most of the overall decline. Yet, Elsby, Hobijn, and Şahin (2014) rejected the hypothesis that the substitution of capital for labor explains the fall in the labor share, which they argued does not match the trends that theory would predict. Instead, they tentatively suggested that offshoring has played a significant role, rather than technology.

One limitation of Autor and Salomons’ (2018) method, as it applies to understanding technology, is that total factor productivity growth can come through channels that are not tied to technology. The quality of human capital, access to international markets, industrial organization, the degree of misallocation, and the institutional context all affect measured total factor productivity growth but may be related to actual technology in complex ways (Jones 2016). On the other hand, an encouraging result of Autor and Salomons (2018) that goes beyond this limitation is that productivity growth—whether it comes from technology or other sources—has not coincided with net losses in employment.

Using a different accounting-based approach, Eden and Gaggl (2018) estimated that information and communications technologies replaced a large number of routine workers (which they defined as the following occupations: sales, office, clerical, administration, production, transportation, construction, and installation, maintenance, and repair) with capital equipment. They also found that information technology rebalanced the labor share of national income in the U.S. toward capital and workers in non‑routine occupations.

A strand of the literature acknowledges that the Industrial Revolution was generally beneficial to workers and living standards. Workers employed in agriculture were able to move into other sectors of the economy as agricultural production and food processing became more mechanized and efficient.

Yet, this history is not guaranteed to repeat itself, especially if newer technologies have fundamentally different characteristics. For example, the power of automation to perform complex cognitive tasks via AI distinguishes it from the automated technologies of the Industrial Revolution. Likewise, digital technologies can almost instantly transmit data anywhere in the world from any place, which is not something that pre-digital technologies could do. One possible implication is a reduction in demand for human monitoring and control activities. Along these lines, economists as recently as 2003 classified driving an automobile as impervious to automation (Autor, Levy, and Murnane 2003), but self-driving cars are already a reality only a decade and a half later.

Motivated by these and related concerns, economists have made various attempts to study how the adoption of current or future technologies could affect the labor market. In a widely cited paper, Frey and Osborne (2017) provided a review of recent advances in machine learning, AI, and related technologies that collectively are performing both cognitive and manual tasks that would have been difficult to anticipate even a decade ago. In their economic model, they relaxed the literature’s constraint that technology is limited to routine tasks and treated three engineering bottlenecks (perception, creative intelligence, and social intelligence) as the significant practical limits on automation.

In attempting an empirical analysis of these concepts, Frey and Osborne (2017) confronted obvious measurement challenges. Their solution was to combine a subjective assessment with an objective source of information on the task content of occupations (from O*NET) and the level of skill required by the occupations, with respect to the three bottlenecks. The subjective evaluation consisted of expert categorization of a subset of occupations (70 of 702) by participants in a machine learning conference at Oxford University. Each participant was asked to rate an occupation as automatable based on the answer to this question:

“Can the tasks of this job be sufficiently specified, conditional on the availability of big data, to be performed by state-of-the-art computer-controlled equipment?” (Ibid, 30.)

The binary answers to this question were then modeled as a function of the O*NET-based scores on the bottlenecks. The best-fitting models were then used to calculate an automatability score for all 702 occupations, using the features of jobs that best predicted automation as assessed by the experts. They classified occupations as high-risk if the estimated probability of automation was 70% or higher and low-risk if it was under 30%. This exercise led to the conclusion that 47% of U.S. jobs are at high risk of automation within the next two decades. They found that many jobs in office and administrative support, transportation, and services are at risk, despite the latter not typically being considered routine. Additionally, Webb (2019) found that AI, in contrast with previous new technologies like software and robots, is directed at high-skill tasks. This research suggests that highly skilled workers may be displaced at a higher rate given the current rate of adoption of AI.

Frey and Osborne (2017) acknowledged that this estimate is not a prediction about the percentage of jobs that will actually be automated, because they explicitly did not model the relative costs of capital versus labor, nor did they consider that technology might partially automate a job. A further limitation is that they did not consider the research and development costs of these potential applications. Thus, as others have pointed out, their result was not a measure of what is economically feasible, so much as an estimate of what is technologically feasible (Arntz, Gregory, and Zierahn 2016).
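The thresholding step behind a headline figure of this kind can be sketched as follows. This is an illustration only, not Frey and Osborne's (2017) code: the occupations, probabilities, and employment counts are invented, the "medium" label for the band between the two thresholds is a naming choice made here, and weighting occupations by employment is an assumption about how a share of jobs would be computed.

```python
HIGH_RISK = 0.70  # probability at or above this is treated as high-risk
LOW_RISK = 0.30   # probability below this is treated as low-risk


def risk_category(probability):
    """Map an estimated automation probability to a risk band."""
    if probability >= HIGH_RISK:
        return "high"
    if probability < LOW_RISK:
        return "low"
    return "medium"  # label assumed here for the band in between


def employment_share_by_risk(occupations):
    """Share of total employment in each risk band.

    `occupations` is a list of (name, probability, employment) tuples.
    """
    total = sum(employment for _, _, employment in occupations)
    shares = {"high": 0.0, "medium": 0.0, "low": 0.0}
    for _, probability, employment in occupations:
        shares[risk_category(probability)] += employment / total
    return shares


# Hypothetical occupations; none of these numbers come from the paper.
toy_occupations = [
    ("occupation A", 0.92, 400),
    ("occupation B", 0.70, 100),
    ("occupation C", 0.45, 200),
    ("occupation D", 0.05, 300),
]

shares = employment_share_by_risk(toy_occupations)
for band, share in shares.items():
    print(f"{band}: {share:.0%}")
```

The sketch makes the limitation discussed above concrete: every worker in an occupation inherits that occupation's single probability, and nothing in the calculation reflects the cost of capital, partial automation of a job, or the pace of adoption.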

Two papers from OECD economists have attempted to refine Frey and Osborne’s (2017) estimates and apply them to a larger group of developed countries.

Arntz, Gregory, and Zierahn (2016) used Frey and Osborne’s (2017) occupational results as their main dependent variable and calculated the probability of automation based on the underlying characteristics of the worker and his or her job. Crucially, they allowed job tasks within the same occupational category to vary and have independent effects on the probability of automation, using data from the OECD Program for the International Assessment of Adult Competencies (PIAAC) exam. This approach acknowledged two important things: occupations contain multiple tasks, and even within the same occupation, workers do not perform exactly the same functions at the same level of complexity. Their results showed that jobs that involve more complex tasks are less automatable, especially those involving tasks such as influencing, reading, writing, and computer programming. Moreover, human capital—measured by education level, experience, and cognitive ability—lowers the risk of working in an occupation deemed automatable by Frey and Osborne (2017).

Their final estimate, which they cautioned likely overstates the actual likelihood of automation, predicts that only 9% of workers in the U.S., and in the average OECD country, face a high risk of losing their job to automation within an unspecified number of years—estimated by Frey and Osborne (2017) to be roughly 10 to 20. This is likely to be an overestimate because they did not consider, as the authors pointed out, the slow pace of technological adoption, nor the economic incentives for companies to produce or adopt the technology.
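One reason a worker-level approach can yield a much smaller high-risk share than an occupation-level approach is shown in the toy comparison below. The occupations, probabilities, and the 70% threshold applied to individual workers are hypothetical choices for illustration; in Arntz, Gregory, and Zierahn (2016) the worker-level probabilities come from a model based on PIAAC data, which this sketch does not reproduce.

```python
def share_high_risk_occupation_level(workers, occupation_probability, threshold=0.70):
    """Every worker inherits the single probability assigned to the occupation."""
    flagged = sum(1 for w in workers
                  if occupation_probability[w["occupation"]] >= threshold)
    return flagged / len(workers)


def share_high_risk_worker_level(workers, threshold=0.70):
    """Each worker has an individual probability reflecting the tasks he or she performs."""
    flagged = sum(1 for w in workers if w["probability"] >= threshold)
    return flagged / len(workers)


# Hypothetical occupation-level scores.
occupation_probability = {"clerk": 0.75, "analyst": 0.20}

# Hypothetical workers: clerks differ in how complex their tasks are,
# so their individual probabilities spread around the occupation's score.
workers = [
    {"occupation": "clerk", "probability": 0.55},
    {"occupation": "clerk", "probability": 0.65},
    {"occupation": "clerk", "probability": 0.72},
    {"occupation": "clerk", "probability": 0.90},
    {"occupation": "analyst", "probability": 0.10},
    {"occupation": "analyst", "probability": 0.25},
]

by_occupation = share_high_risk_occupation_level(workers, occupation_probability)
by_worker = share_high_risk_worker_level(workers)
print(f"occupation-level: {by_occupation:.0%}, worker-level: {by_worker:.0%}")
```

In this toy data, all four clerks are flagged under the occupation-level rule, but only two of them exceed the threshold once their individual task mix is taken into account.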

Nedelkoska and Quintini (2018) followed the above methods closely but allowed tasks to vary based on a classification of tasks into Frey and Osborne’s (2017) bottlenecks, instead of the more general set of occupational characteristics used by Arntz, Gregory, and Zierahn (2016). Nedelkoska and Quintini’s (2018) estimate was nonetheless close to the latter’s in that they found 14% of jobs are at risk of automation across 32 countries. They emphasized, however, that roughly half of the jobs across OECD countries could be affected by automation, as some aspect of the job is likely to be changed.

Other relevant research in this area looks at how the skills of occupations have changed in relation to technology. After analyzing changes in the important job tasks across occupations from 2006 to 2014, MacCrory, Westerman, Alhammadi, and Brynjolfsson (2014) concluded that skills that complement technology (e.g., familiarity with equipment) are increasing along with skills that do not currently compete with machines (e.g., interpersonal). On the other hand, skills that do compete with machines (e.g., manual and perception) are declining, in that workers were less likely to report these skills as important in 2014 relative to 2006 within the same occupation group. The upshot of this analysis is that job tasks are gradually drifting away from work that competes with machines, and the jobs affected are more likely to be those that require less formal education.

After reviewing the latest trends in technology across various industries, Ford (2015) predicted that technology will create massive social disruption, but he also concluded that new technologies “will primarily threaten lower-wage jobs that require modest levels of education and training” (p.26). He was, however, skeptical about the possibility of absorbing millions of displaced workers into high-wage jobs, which he believed will also face competition from machines that are increasingly able to perform cognitive tasks like writing, data analysis, and problem solving.

Economists have wrestled with the implications of technological change from the beginning of the Industrial Revolution to the present, and yet no theoretical consensus has emerged as to what effects new technologies will have on the labor market.

One of the key constructs in the literature relates to the classification of work into theoretically tractable categories. The early literature limited this to skilled and unskilled labor, which proved too simplistic, as middle-skilled jobs in manufacturing and clerical work experienced lower demand than low-skilled service occupations. The task framework helped fill this gap by explaining the hollowing out of certain “middle-skilled” occupations as a result of technological displacement. However, this framework is inadequate for explaining the demand for low-skilled jobs. As Manning (2004) argued, demand for low-paying service jobs can be thought of as stemming from local demand from highly paid workers, adding considerable nuance to the skill-biased technological change framework. If technology raises productivity disproportionately for professional workers, labor demand for lower-paying service jobs may be an inevitable result. Along these lines, Goos, Konings, and Rademakers (2016) also found evidence that STEM jobs create spillovers for non-STEM jobs. Acemoglu and Restrepo’s (2019) reinstatement and productivity effects capture some of these potential channels, but additional theoretical and empirical work would be fruitful.

Further refinements of the theory led to the classification of work based on combinations of cognitive, manual, routine, and non-routine task performance. More recently still, the recognition of advances in AI and related technologies has led to the suggestion that perception, creativity, and social intelligence are important cross-cutting characteristics of tasks, and recent work by OECD economists points to specific job tasks that may or may not fit into those categories (Arntz, Gregory, and Zierahn 2016).