United States Department of Labor

The Consumer Price Index (CPI) program updates its sample of geographic areas on the basis of the most recent decennial census, to ensure that the sample accurately reflects shifts in the U.S. population. This article describes CPI’s latest area-sample redesign, which will be used with the introduction of 2018 price indexes.

Update: September 14, 2017

The new area design implementation plan to introduce new primary sampling units (PSUs) in four waves over a 4-year period beginning in January 2018 has been modified as follows:

In addition, BLS will continue to publish monthly region-size class indexes for A- and B/C-sized cities in the four census regions.

The CPI sample-design process involves multiple stages. In the first stage, a sample of geographic areas is selected. In subsequent stages, a sample of outlets in which area residents make retail purchases, a sample of specific retail goods and services, and a sample of residential housing units are selected. While these latter samples are rotated on a regular basis, the geographic sample has traditionally been rotated once every 10 years. The 2018 area revision will mark the geographic sample’s first rotation since 1998.1

Historically, a new area sample had been selected and implemented after each decennial census. This selection was done jointly with the Consumer Expenditure Survey (CE) program, from which CPI obtains expenditure weights for price indexes and selection probabilities for goods and services. Because all new geographic areas were rotated into the CPI all at once, field collection had to continue in old sampling areas while survey operations were starting up in new areas. This practice typically caused a spike in field collection costs, which had to be covered through a special funding initiative. Effective with the 2018 geographic redesign, CPI will rotate its sample to new geographic areas on a continuous basis, over a period of consecutive years, until all new areas have been brought into the sample.2 This approach is expected to be more cost effective.

In general, the process used to select the 2018 area sample is similar to the process used in the 1998 area revision.3 The basic steps in both cases include the following:

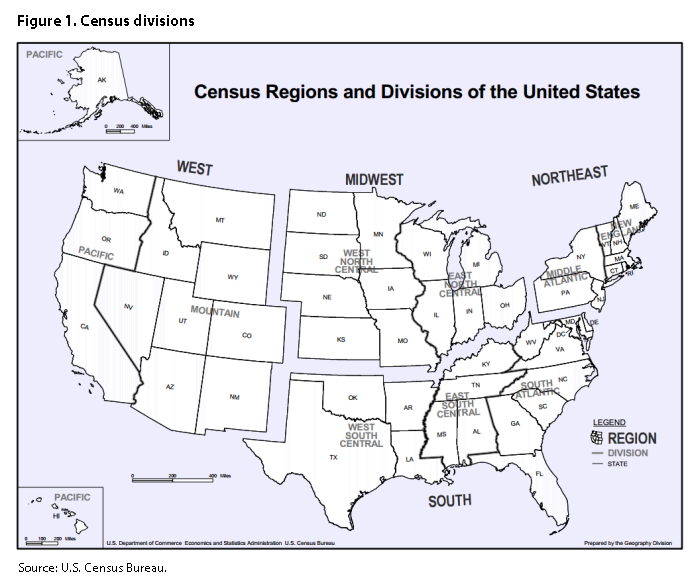

Despite these similarities, the new process has introduced some notable methodological changes within each of the basic steps. First, the sample classification structure has been changed. The 1998 design classified areas into four Census regions by two size classes for a total of eight groups; the 2018 design classifies these areas into nine Census divisions.5 Second, the area definitions of PSUs have been updated to reflect the most recent Office of Management and Budget (OMB) area definitions.6 Third, in the new design, the number of sampled PSUs in the CPI has been reduced from 87 to 75. Finally, changes were made to the stratification variables and the sampling process for selecting PSUs. The purpose of this article is to explain these methodological changes in more detail and to describe the plan for rotating PSUs to the new area sample over a 4-year transition period.

In the CPI, geographic sample variables represent one dimension of the overall index classification structure. In the current area design, the urban portion of the United States is divided into 38 geographic areas, called index areas. In addition, the set of all goods and services purchased by consumers is divided into 211 categories, called item strata. Combining these two dimensions results in 8,018 (38 × 211) basic item–area indexes.

In the 1998 sample design, areas were first classified by location, on the basis of one of four Census regions: Northeast, Midwest, South, and West. Then, each area was classified into one of three population-size classes: self-representing areas (A-size), medium nonself-representing areas (B-size), and small nonself-representing areas (C-size).9 In the 2018 sample design, areas were first classified by location, into one of nine Census divisions: New England, Middle Atlantic, East North Central, West North Central, South Atlantic, East South Central, West South Central, Mountain, and Pacific. The Census divisions represent a further breakdown of Census regions. (See figure 1.) In addition, each area was classified into one of two population-size classes—self-representing or nonself-representing—with the use of the size cutoff described later in the article, in the section on determining the number of sampled PSUs.

The main impetus for using the nine Census divisions instead of the Census regions and size classes from the 1998 sample design was to create and support indexes that are more locally defined. In order to maintain approximately the same number of classification groups for nonself-representing areas, CPI combined the B- and C-size classes. The proportion of medium- and small-size areas within a Census division was determined through a process called controlled selection (see section on sample selection). A BLS study found that the use of by-division indexes had little effect on estimates for the U.S. all-items index, regional CPI indexes, and 12-month standard errors.10

Given the change in classification for the nonself-representing areas (from Census region and size class to Census division), the only way to reduce the number of index areas was to decrease the existing number (31) of self-representing areas.11

After each decennial census, OMB releases a new set of definitions for statistical areas. The current definitions assign counties surrounding an urban core area to geographic entities called Core-Based Statistical Areas (CBSAs). The assignment is based on each county’s degree of economic and social integration (as measured by commuting patterns) with the urban core. There are two types of CBSAs: metropolitan and micropolitan. A metropolitan CBSA has an urban core of at least 50,000 people, and a micropolitan CBSA has an urban core of at least 10,000 but fewer than 50,000 people. CBSAs may cross state borders. In addition, OMB defines Combined Statistical Areas (CSAs), which are combinations of two or more CBSAs.

In the 1998 area sample design, the CPI program distinguished among A-, B-, and C-sized PSUs. The B-sized PSUs were Metropolitan Statistical Areas (MSAs), defined by OMB in 1993; the C-sized PSUs were urban parts of non-MSA areas; and the A-sized PSUs were an MSA mixture, in which some MSAs were combined to maintain continuity with area definitions from the 1987 CPI geographic revision. Because CBSAs are the conceptual successor of earlier metropolitan area definitions, using the metropolitan and micropolitan CBSA definitions for nonself-representing areas was a natural choice. However, there was a question whether the CSA definitions should be used for self-representing PSUs. In some cases, the A-sized PSUs in the 1998 sample more closely resembled CSA definitions; in other cases, they more closely resembled the new metropolitan CBSA definitions. The problem with CSA definitions is that they often create a very large geographic area in which CPI has to conduct field operations and collect prices. For this reason, CPI decided to strictly adhere to the new metropolitan CBSA definitions for self-representing PSUs.

Currently, BLS publishes the CPI-U, which covers approximately 87 percent of the U.S. population. With the introduction of the CBSA concept to the CPI, the CPI-U coverage will increase to 94 percent of the U.S. population reflected in the 2010 census.12 The area sample frame will comprise 381 metropolitan CBSAs, representing approximately 85 percent of the population, and 536 micropolitan CBSAs, representing approximately 9 percent of the population.

For the area sample, CPI has traditionally selected one PSU per stratum. The number of strata determines the total number of PSUs in the sample. Specifying the number of strata depends on a variety of factors, including that number’s expected overall impact on the accuracy of the U.S. all-items CPI and the total budget available for data collection. With respect to accuracy, special consideration is given to the expected impact on sampling variance and the expected impact on bias.

Currently, the CPI has 87 urban PSUs, whereas the CE has 91 PSUs (75 urban and 16 rural).13 With the 2018 area revision, the CPI program will reduce the total number of PSUs in the CPI, to 75. This reduction will eventually allow CPI to collect prices in the same set of PSUs as that used by the CE program to collect expenditure information.14 In addition, the reduction will lower the overall cost of implementing the new sample, because of an expected increase in the percentage of overlapping areas and a decrease in the number of new areas. Most importantly, the change will increase the average number of price quotes per index area and, therefore, help address the small sample bias created by basic item–area indexes. Of course, maintaining the same number of total quotes in the CPI and reducing the number of PSUs would increase the standard error of CPI estimates, because of the loss of information in the PSU component of variance. However, using variance models, CPI estimated that the 6-month standard error of the U.S. all-items CPI would see a modest increase of 2 to 5 percent.15 These estimates were based on the assumption that the total number of quotes in the CPI would be maintained and that the number of self-representing PSUs would be reduced from 31 to 23.

To determine the ideal number of self-representing PSUs in the new area sample with 75 PSUs, the CPI program again used variance models to simulate 6-month variance estimates in the U.S. all-items CPI for different population-size cutoffs. The simulation showed that, for any population cutoff between 2.0 and 3.0 million, the range of the modeled 6-month standard errors was extremely narrow. (The largest simulated difference was around 1 percent, which is the difference between, say, a standard error of .0657 and .0650.) This narrow range gave CPI some flexibility in determining the exact population cutoff. The cutoff was ultimately set at 2.5 million, which resulted in 23 self-representing PSUs. These PSUs include 21 units whose population is greater than 2.5 million and 2 additional units—Anchorage, AK, and Honolulu, HI. Anchorage represents all CBSAs in Alaska, and Honolulu represents all CBSAs in Hawaii. These CBSAs are unique because the locations of both states make price change in their markets geographically isolated from that in other markets. For this reason, the CBSAs in Alaska and Hawaii are treated as separate geographic strata.
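The classification rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `is_self_representing` and the sample populations are hypothetical placeholders, and the only values taken from the text are the 2.5 million cutoff and the two isolated areas.

```python
# Sketch of the self-representing rule: population above the cutoff,
# or one of the two geographically isolated areas named in the text.
POPULATION_CUTOFF = 2_500_000
ISOLATED_AREAS = {"Anchorage, AK", "Honolulu, HI"}


def is_self_representing(name: str, population: int) -> bool:
    """Return True if a CBSA would be treated as a self-representing PSU."""
    return population > POPULATION_CUTOFF or name in ISOLATED_AREAS


# Populations below are illustrative placeholders, not census figures.
examples = {
    "Large metro": 4_500_000,
    "Mid-size metro": 1_200_000,
    "Anchorage, AK": 400_000,
}
classified = {
    name: is_self_representing(name, pop) for name, pop in examples.items()
}
print(classified)
```

The rule is strict: an area with exactly 2.5 million people would not qualify under this reading of "greater than 2.5 million."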

With 23 self-representing PSUs and nine Census divisions, the new area design will yield 6,752 basic indexes (32 index areas by 211 item strata) for the U.S. all-items CPI. This reduction (approximately 16 percent) in the number of basic indexes will help address the small sample bias in index estimates.
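The index counts quoted for the two designs follow from simple multiplication, and the short check below reproduces them. The helper name `basic_index_count` is hypothetical; the inputs (38 index areas, 23 self-representing PSUs, nine divisions, 211 item strata) come from the text.

```python
ITEM_STRATA = 211


def basic_index_count(index_areas: int, item_strata: int = ITEM_STRATA) -> int:
    """Number of basic indexes: one per index area and item stratum."""
    return index_areas * item_strata


old_count = basic_index_count(38)        # 1998 design
new_count = basic_index_count(23 + 9)    # 2018: self-representing PSUs + divisions
reduction_pct = 100 * (old_count - new_count) / old_count

print(old_count, new_count, round(reduction_pct, 1))
```

Because the number of item strata is the same in both designs, the percentage reduction depends only on the change in index areas (from 38 to 32).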

The goal of area stratification is to reduce the overall sampling variance in the CPI. This is achieved by grouping nonself-representing PSUs whose characteristics are similar and highly correlated with price change and consumption behavior. In the 1998 sample design, four independent variables were used for stratifying the nonself-representing PSUs: normalized (centered and scaled by the range) longitude, the square of normalized longitude, normalized latitude, and percent urban. Instead of simply repeating the stratification from the previous area revision, CPI reanalyzed the stratification process from scratch. To determine the best possible area stratification, the effort involved not only the reassessment of geographic variables, such as latitude and longitude, but also an analysis of potential demographic variables. The decision to investigate demographic variables (besides percent urban) was highly influenced by the introduction of the American Community Survey (ACS), which replaced the decennial census long-form survey.16 The ACS introduced rolling 3-year estimates that would cover every community with a population greater than 20,000 and 5-year estimates that would cover every community in the nation.17 Previously, the decennial census provided only a snapshot of the demographic variables.

The ACS presented 52 main topics of social characteristics in its 3-year estimates for 2005–07. It would have been difficult to investigate all of these variables when modeling CPI percent-change estimates. Therefore, the first task was to limit the set of ACS variables to a manageable list, and the second task was to aggregate these statistics to the CBSA level. This approach produced 23 variables that were used in the first stage of modeling.

The first task involved eliminating variables that were thought to have little explanatory power in the CPI. The guiding philosophy was to take ACS variables—such as race, educational attainment, and property statistics—that could affect price change. Housing variables were selected because indexes for shelter make up a large proportion of the CPI. The second task involved aggregating the county-level ACS data to the CBSA level. If median statistics for all member counties were available, they were averaged. Examples of such averages include the average median property value and the average median income for a CBSA. Other CBSA statistics, expressed as an average or a percentage, were calculated with the use of a weighted average based on the requisite county’s population. These calculations yielded statistics such as the median household property value and the percentage of the CBSA population that is Native American.
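The two aggregation rules can be illustrated with a small sketch. The county records, field names, and helper functions below are hypothetical and serve only to contrast the unweighted average of county medians with the population-weighted average used for other statistics.

```python
from statistics import mean

# Hypothetical county records for one CBSA; names and values are placeholders.
counties = [
    {"population": 600_000, "median_income": 52_000, "pct_urban": 40.0},
    {"population": 300_000, "median_income": 61_000, "pct_urban": 25.0},
    {"population": 100_000, "median_income": 46_000, "pct_urban": 30.0},
]


def average_median(records, field):
    """Unweighted mean of county medians (an 'average median' statistic)."""
    return mean(r[field] for r in records)


def weighted_average(records, field, weight="population"):
    """Population-weighted mean of a county-level average or percentage."""
    total_weight = sum(r[weight] for r in records)
    return sum(r[field] * r[weight] for r in records) / total_weight


cbsa_income = average_median(counties, "median_income")
cbsa_pct_urban = weighted_average(counties, "pct_urban")
print(cbsa_income, cbsa_pct_urban)
```

The average of medians is not itself a median for the CBSA, which is why the text refers to these statistics as "average median" values.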

The final list of housing and demographic variables considered for potential inclusion in the stratification model was as follows:

To model these stratification variables, CPI developed a series of nonoverlapping all-items price relatives19 for each PSU in the current area sample, using the same timeframe as that for the ACS demographics. The ACS variables investigated spanned the period 2005–07 and were released in December 2008. Unofficial price relatives for B- and C-sized PSUs were produced to serve as responses in the modeling procedure and used in conjunction with the existing price relatives for A-sized PSUs. The 12-month (December to December) price relatives used were for 2004–05, 2005–06, 2006–07, and 2007–08.

A backward-elimination process was used to limit the set of variables to those with small p-values.

| Effect | Numerator DF | Denominator DF | F-value | p-value |
|---|---|---|---|---|
| Period | 1 | 81 | 173.42 | < .0001 |
| Census region(1) | 3 | 81 | 10.27 | < .0001 |
| Number of households | 1 | 81 | 12.51 | .0007 |
| Household property value | 1 | 81 | 11.51 | .0011 |

Notes: (1) Census region was used in lieu of Census division for two reasons. First, the current sample design was not intended to support division estimates. Second, the determination of the stratification model was completed before it was decided to implement the Census divisions. Source: U.S. Bureau of Labor Statistics.

To determine the predictive power of a particular model, CPI used linear regression to examine each 1-year all-items price relative in the 2005–08 timespan. The first linear model used the 1-year price relative for December 2004 to December 2005 as the dependent variable, the second used the 1-year price relative for January 2005 to January 2006, the third for February 2005 to February 2006, and so forth, until every 1-year price relative was used. These linear models produced a distribution of R-squared statistics. The mean of this distribution was calculated to describe the predictive power of each model. This process was repeated for lower level 1-year price indexes for energy, local services, food and beverages, and local shelter. The backward-elimination process, described earlier, was implemented for each of these subindexes. The R-squared statistic was again calculated to determine the predictive quality of the final set of variables for each subindex. However, none of these variables proved to be highly predictive, as they all had R-squared statistics smaller than 0.3. Models also were evaluated for each of the four Census regions, again with little success. In addition to the variables from the ACS, the following stratification variables from the previous area revision were considered for each PSU in the current sample: longitude, latitude, longitude squared, latitude squared, and percent urban. However, these variables also had very little predictive power and were not always significant in the all-items model.
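The rolling evaluation described above can be sketched as follows. This is a deliberately simplified illustration, not the models CPI fitted: it uses a single hypothetical predictor rather than several stratification variables, the index series are synthetic, and all function names are assumptions.

```python
def r_squared(x, y):
    """R-squared of a simple least-squares line fitted to (x, y)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy ** 2 / (sxx * syy)


def twelve_month_relatives(index_series):
    """Price relative I[t+12] / I[t] for every available starting month t."""
    return [index_series[t + 12] / index_series[t]
            for t in range(len(index_series) - 12)]


def mean_r_squared(predictor, index_by_psu):
    """Fit one cross-PSU model per 12-month window and average the R-squared."""
    relatives = [twelve_month_relatives(series) for series in index_by_psu]
    scores = []
    for window in range(len(relatives[0])):
        y = [psu_relatives[window] for psu_relatives in relatives]
        scores.append(r_squared(predictor, y))
    return sum(scores) / len(scores)


# Synthetic data: four PSUs whose monthly growth rises with the predictor.
predictor = [1.0, 2.0, 3.0, 4.0]
monthly_growth = [0.001, 0.002, 0.003, 0.004]
index_by_psu = [[100 * (1 + g) ** t for t in range(14)] for g in monthly_growth]
avg_r2 = mean_r_squared(predictor, index_by_psu)
print(round(avg_r2, 4))
```

In this synthetic case the predictor explains nearly all of the variation by construction; the article reports that the real variables did not, with R-squared values below 0.3.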

Once several final models were derived, the PSUs included in these models were stratified with an “equal population” constraint, under which each stratum would have a population within 10 percent of the mean of all strata in the index area. Because this constraint conflicted with the variable for number of households, which is highly correlated with population, that variable was excluded from the models.
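The "equal population" constraint amounts to a simple tolerance check, sketched below. The function name is hypothetical; the example populations are the stratum populations listed in the appendix for the nonself-representing strata in the New England (N11B, N11C) and Middle Atlantic (N12C through N12F) divisions.

```python
def within_population_tolerance(stratum_populations, tolerance=0.10):
    """True if every stratum is within `tolerance` of the mean stratum size."""
    mean_pop = sum(stratum_populations) / len(stratum_populations)
    return all(
        abs(pop - mean_pop) <= tolerance * mean_pop
        for pop in stratum_populations
    )


new_england = [5_005_793, 4_233_926]
middle_atlantic = [4_065_877, 3_483_174, 3_925_318, 3_562_332]

print(within_population_tolerance(new_england))
print(within_population_tolerance(middle_atlantic))
print(within_population_tolerance([1_000_000, 2_000_000]))  # deliberately unbalanced
```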

In addition, since the area sample design is also intended to support the CE, ACS variables were investigated for correlation with expenditure estimates. Average median household income and average median property value were, by far, the best demographic predictors of consumer expenditures (the two-variable model investigated for the 2005–08 timeframe had an R-squared statistic of 0.65).

Finally, three separate stratification models were investigated: a seven-variable model, a six-variable model, and a four-variable model. The seven-variable model included percent urban, income, property value, longitude, latitude, longitude squared, and latitude squared. The six-variable model contained the same variables, except for percent urban, and the four-variable model excluded longitude squared, latitude squared, and percent urban.

Ultimately, the four-variable model (longitude, latitude, median property value, and median household income) was selected, because longitude squared and latitude squared added little predictive value. None of the four variables in the model turned out to be an influential predictor of CPI price change over time and across regions. Table 2 shows the model’s predictive accuracy (R-squared) over time for each Census region and subcategory investigated.

| Index | Total | Northeast region | Midwest region | South region | West region |
|---|---|---|---|---|---|
| All items | .158 | .311 | .224 | .160 | .330 |
| Energy | .216 | .529 | .162 | .261 | .483 |
| Food and beverages | .164 | .338 | .174 | .153 | .392 |
| Housing | .276 | .469 | .206 | .236 | .407 |
| Local services | .076 | .201 | .098 | .207 | .173 |

Source: U.S. Bureau of Labor Statistics.

The final stratification model of longitude, latitude, median property value, and median household income is a compromise between having significant predictors for the CE and retaining geographic variables used in the previous area design. The addition of the income and property-value variables will greatly enhance the area stratification for the CE and substantially reduce the between-PSU variance. It will not, however, significantly increase the explanatory power of the model for price change. Despite this, producing more reliable consumer expenditure estimates will help in the calculation of the CPI. Because the new stratification model is very different from its predecessor, a sample-overlap procedure will be used to retain as many nonself-representing PSUs from the 1998 area sample as possible.

To allocate sample to the nonself-representing PSUs, CPI excluded the population for the self-representing PSUs for each Census division. Table 3 presents the proportional-to-population-size sample allocation, by Census division, for the 2018 geographic area design. There are 23 self-representing PSUs, which account for approximately 39 percent of the total U.S. population and about 42 percent of the CPI-U population. There are 52 nonself-representing PSUs, which represent the remaining 58 percent of the CPI-U population and include both metropolitan and micropolitan areas.

| Census division | Nonself-representing PSUs | Self-representing PSUs | Total |
|---|---|---|---|
| Total | 52 | 23 | 75 |
| 1—New England | 2 | 1 | 3 |
| 2—Middle Atlantic | 4 | 2 | 6 |
| 3—East North Central | 8 | 2 | 10 |
| 4—West North Central | 4 | 2 | 6 |
| 5—South Atlantic | 12 | 5 | 17 |
| 6—East South Central | 6 | 0 | 6 |
| 7—West South Central | 8 | 2 | 10 |
| 8—Mountain | 4 | 2 | 6 |
| 9—Pacific | 4 | 7 | 11 |

Source: U.S. Bureau of Labor Statistics.

The next phase of the selection process was to assign the nonself-representing PSUs within each Census division to strata based on a model of the four stratification variables (latitude, longitude, median household income, and median property value).20 The primary objective of the PSU stratification was to minimize the between-PSU component of variance by making the PSUs within each stratum as homogeneous as possible with respect to the four stratification variables. In addition, to further minimize the variance, strata within each Census division had to be kept with approximately the same population. In the 1998 design, this type of constrained clustering problem was solved with a Friedman-Rubin hill-climbing algorithm.21 For the 2018 design, CPI developed a new heuristic stratification algorithm based on k-means clustering and zero–one integer linear programming.22
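To give a sense of the clustering step, the sketch below runs plain k-means on standardized stratification variables. It is not the CPI algorithm: it omits the equal-population constraint and the zero–one integer programming stage, uses a naive initialization (the first k points), and operates on hypothetical PSU values.

```python
import math


def standardize(rows):
    """Center and scale each column so no single variable dominates distance."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    sds = [
        math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
        for c, m in zip(cols, means)
    ]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]


def kmeans(points, k, iterations=50):
    """Plain Lloyd's algorithm; the first k points serve as initial centers."""
    centers = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: nearest center by squared Euclidean distance.
        new_labels = [
            min(range(k),
                key=lambda j: sum((a - b) ** 2 for a, b in zip(p, centers[j])))
            for p in points
        ]
        # Update step: move each center to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, new_labels) if lab == j]
            if members:
                centers[j] = [sum(c) / len(members) for c in zip(*members)]
        if new_labels == labels:
            break
        labels = new_labels
    return labels


# Hypothetical PSUs: (longitude, latitude, median income, median property value).
psus = [
    (-71.0, 42.3, 70_000, 350_000),
    (-90.0, 30.0, 45_000, 150_000),
    (-72.5, 41.8, 68_000, 330_000),
    (-91.2, 30.5, 47_000, 160_000),
]
strata = kmeans(standardize(psus), k=2)
print(strata)
```

Standardizing first matters because the raw variables are on very different scales; without it, property value would dominate the distance calculation.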

The final step of the selection process was to select one PSU per stratum. However, before making that final selection, CPI had to employ two special selection procedures: a sample-overlap procedure and a controlled-selection procedure.

In the 1998 design, a sample-overlap procedure was used to select the nonself-representing areas for the CPI and CE surveys.23 Sample-overlap procedures increase the expected number of nonself-representing geographic areas that would be reselected in the new design. Because the use of an overlap procedure results in fewer new areas that need to be rotated in the sample and in fewer existing areas that need to be rotated out of the sample, it lowers the expected costs of operational changes (e.g., hiring and training of new field staff) associated with the new area design.

Two different sample-overlap procedures were considered: one proposed by Walter Perkins and one by Lawrence Ernst.24 The Perkins procedure is a heuristic method that was used in previous redesigns. The Ernst procedure uses linear programming. Because linear programming is employed in optimization, using the Ernst procedure would result in a higher expected number of overlapping PSUs and, consequently, lower overall cost of switching to the new area design for both the CPI and the CE. Only nonself-representing metropolitan PSUs were deemed eligible for the procedure. Micropolitan areas were deemed ineligible because they did not have enough renters for the CPI Housing Survey. Each micropolitan area must have enough renters for two samples (of six panels each) during the decade between area redesigns. In the past, the CPI program sometimes had to extend the area definition for the CPI Housing Survey, to include outlying rural counties and, thus, ensure that the survey had enough renters. Table 4 allows a comparison between the expected number of overlapping PSUs calculated with the two procedures and the expected number of nonself-representing areas selected independently.

| Census division | PSU design | Independent selection | Sample-overlap procedure: Perkins | Sample-overlap procedure: Ernst |
|---|---|---|---|---|
| Total | 58 | 13.2 | 19.3 | 28.6 |
| 1—New England | 2 | .5 | .7 | 1.0 |
| 2—Middle Atlantic | 4 | .7 | 1.2 | 1.7 |
| 3—East North Central | 8 | 2.9 | 3.6 | 4.8 |
| 4—West North Central | 4 | .8 | 1.4 | 2.1 |
| 5—South Atlantic | 14 | 3.2 | 4.5 | 7.0 |
| 6—East South Central | 6 | .8 | 1.1 | 2.2 |
| 7—West South Central | 8 | 2.1 | 2.6 | 4.4 |
| 8—Mountain | 6 | 1.4 | 2.6 | 3.2 |
| 9—Pacific | 6 | .9 | 1.6 | 2.2 |

Note: This analysis was done for a total of 87 PSUs (58 nonself-representing) before it was decided to move to the final design of 75 PSUs (52 nonself-representing). Source: U.S. Bureau of Labor Statistics.

Ultimately, the Ernst procedure was selected and used, because it increased the overlap between the PSUs in the new area sample and those in the 1998 sample. The outcome of the procedure gave a new set of selection probabilities, which were used to select the sample.

Controlled selection is a process of selecting a random sample of PSUs such that the probability of selecting certain preferred combinations of PSUs increases and the probability of selecting nonpreferred combinations of PSUs decreases. This is accomplished by controlling the interaction among the PSU selections in different strata. Given that only one sample is ultimately selected, there may be important reasons for preferring some possible sample outcomes over others. It is usually judged that balancing the sample with respect to one or more additional variables (besides those from the strata) would increase the degree of confidence in the inferences made about the population. If information on such additional variables is available, then the population may be crossclassified and an implicit stratification achieved with reference to each of these additional variables. However, multiple crossclassifications can be overly restrictive, making it impossible to select a sample that meets all of the constraints.

Controlled selection can be used to control for a variety of variables. In the 1998 design, the number of PSUs per state and the number of PSU overlaps were controlled. For example, if Florida, given its population share in the South, was expecting 2.3 PSUs from that region, then controlled selection gave a 30-percent chance of the state getting 3 PSUs and a 70-percent chance of it getting 2 PSUs. In this case, controlled selection eliminated the possibility of selecting samples with fewer than two PSUs or more than three PSUs.
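The Florida example reflects a general property: when the count is restricted to the two integers surrounding the expected value, the probabilities are determined by the fractional part. The sketch below shows only this marginal calculation, not the controlled-selection procedure itself, and the function name is hypothetical.

```python
import math


def two_point_split(expected_count: float) -> dict:
    """Probabilities over floor and ceiling that preserve the expected count."""
    low = math.floor(expected_count)
    frac = expected_count - low
    if frac == 0:
        return {low: 1.0}
    return {low: 1.0 - frac, low + 1: frac}


split = two_point_split(2.3)
expected = sum(count * prob for count, prob in split.items())
print({count: round(prob, 2) for count, prob in split.items()}, round(expected, 2))
```

With an expected count of 2.3, the split assigns probability 0.7 to two PSUs and 0.3 to three PSUs, and 0.7 × 2 + 0.3 × 3 = 2.3.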

For the 2018 redesign, the greatest concern was about controlling the number of micropolitan areas selected in the sample; controlling by state was deemed a secondary concern. The change in classification for the nonself-representing index areas (from Census region and size class to Census division) meant that controlling the number of PSUs per state would be of less value.

Controlled selection is a computationally intensive process. The solution time for a controlled-selection problem increases exponentially with the size of the problem (e.g., the number of strata). Using the software package SOCSLP, CPI was unable to solve a two-variable controlled-selection problem for the South region.25 Therefore, only micropolitan status was used as a control variable, and this was done at the Census-region level.

After adjusting the sample selection probabilities with the use of the Ernst sample-overlap procedure and employing controlled selection for the micropolitan areas, CPI randomly selected one PSU per stratum. The resulting (final) area sample for the 2018 revision is shown in the appendix. Thirty-three of the 87 PSUs in the 1998 design will be dropped from the CPI. Two of these exclusions are due to treating the New York, NY, CBSA as one PSU; previously, that CBSA was treated as three PSUs. Meanwhile, only 21 of the 75 PSUs in the 2018 design will be considered new areas. Of the 21 new areas, 14 are metropolitan CBSAs and 7 are micropolitan CBSAs.

In January 2018, CPI will begin publishing indexes for the nine Census divisions, in addition to releasing the current four regional estimates. However, given the new area design, CPI will no longer publish “region by city size” index estimates. Because of the reduction in the number of self-representing areas, the program will be unable to support separate index estimates for Cincinnati, OH; Cleveland, OH; Milwaukee, WI; Pittsburgh, PA; and Portland, OR.26 One other formerly published area, Kansas City, MO, was not reselected as part of the 2018 area revision. The remaining 23 self-representing areas listed in the appendix (denoted by an “S” in the PSU code) will continue to have area indexes published under the new area design.

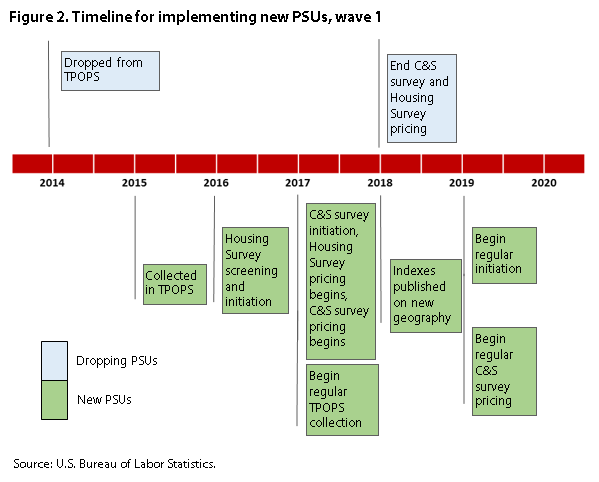

After selecting the final area design, BLS determined the process for implementing the new geographic sample into the four surveys used to construct the CPI. The four surveys are the CE, the Telephone Point-of-Purchase Survey (TPOPS), the Commodity and Services (C&S) survey, and the Housing Survey. In all previous CPI geographic revisions, the conversion process occurred all at once: that is, the administration of each survey switched from the old area sample to the new area sample in its entirety, albeit at different points in time. For example, for the 1998 revision, the CE was switched to the new sample design in 1996; TPOPS was used to identify outlet frames in new PSUs during the 1995–96 period; and the initial round of data collection for the Housing and C&S surveys was completed by the fall of 1997, so that the CPI could be computed by January 1998, on the basis of the new area design.

For the 2018 area revision, the CE fully converted to the new sample in 2015. However, for the other three surveys (which are directly managed by the CPI program), the 21 new PSUs have been divided into groups whereby the new PSUs will be introduced over a 4-year span. This rotation process will distribute the cost of introducing new PSUs into the Housing and C&S surveys, avoiding a spike in data collection costs before the full CPI conversion to the new area design.

The calculation of price indexes under the new area design will begin in January 2018, with the introduction of the first set of new PSUs into the sample. All late-dropping PSUs (i.e., existing PSUs scheduled to be rotated out of the sample late in the implementation process) will be used as proxy candidates for late-rotating new PSUs, until the complete set of new PSUs has been rotated into the sample. An ideal proxy for a given new PSU was considered to be one of the dropping PSUs within the new PSU’s geographic stratum. If no such dropping PSU was available, a proxy was identified through nearest neighbor rules, with the constraint that the proxy fall within 200 miles of the new PSU. If no eligible proxy existed, the new PSU was considered to be a “geographic hole” within the new area structure. There were eight new PSUs with no eligible proxy. Therefore, they were given priority in the rotation schedule.
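The proxy rules can be sketched as a small lookup. Several details here are assumptions rather than documented CPI practice: distance is measured as great-circle (haversine) distance between single coordinates, the first same-stratum dropping PSU is taken when several exist, and all PSU names, strata, and coordinates are hypothetical.

```python
import math

EARTH_RADIUS_MILES = 3958.8
MAX_PROXY_DISTANCE_MILES = 200


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


def choose_proxy(new_psu, dropping_psus):
    """Same-stratum dropping PSU if any; else nearest within 200 miles; else None."""
    same_stratum = [d for d in dropping_psus if d["stratum"] == new_psu["stratum"]]
    if same_stratum:
        return same_stratum[0]["name"]
    candidates = [
        (haversine_miles(new_psu["lat"], new_psu["lon"], d["lat"], d["lon"]),
         d["name"])
        for d in dropping_psus
    ]
    in_range = [c for c in candidates if c[0] <= MAX_PROXY_DISTANCE_MILES]
    return min(in_range)[1] if in_range else None  # None marks a geographic hole


dropping = [
    {"name": "Drop A", "stratum": "X2", "lat": 40.5, "lon": -75.5},
    {"name": "Drop B", "stratum": "X3", "lat": 45.0, "lon": -90.0},
]
new_near = {"name": "New 1", "stratum": "X1", "lat": 40.0, "lon": -75.0}
new_far = {"name": "New 2", "stratum": "X9", "lat": 30.0, "lon": -100.0}
new_same = {"name": "New 3", "stratum": "X3", "lat": 30.0, "lon": -100.0}

print(choose_proxy(new_near, dropping))
print(choose_proxy(new_far, dropping))
print(choose_proxy(new_same, dropping))
```

A return value of `None` corresponds to what the text calls a geographic hole: a new PSU with no eligible proxy.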

In devising the rotation schedule, CPI determined the following field operational constraints: (1) no more than six new PSUs could be rotated in a calendar year, and (2) no more than two new PSUs could be rotated in any of the six BLS regional offices in a calendar year. Because there are 21 new PSUs, the new PSUs will be rotated across four groups, or waves, over a 4-year period. Six new PSUs will be introduced in each of the first three waves, and three new PSUs will be introduced in the fourth, and final, wave. Figure 2 shows the timeline for introducing wave-1 PSUs into the various components of the CPI. The milestones for each successive wave begin exactly 1 year after the corresponding milestones for the previous wave have been completed; according to this schedule, all waves will be completed by the end of 2023. Because the publication of indexes based on the new area design will begin in January 2018, the new PSUs for waves 2–4 will be “proxied” by a dropping PSU. All dropping PSUs that were not designated as proxies (18 PSUs) will be dropped with wave 1. Three new PSUs are considered geographic holes and are part of wave 2. These PSUs will be entirely imputed for the first year of the new area design. The appendix indicates the respective wave during which each new PSU will enter the index.
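The two operational constraints are enough to explain why 21 new PSUs require four waves. The greedy scheduler below is a hypothetical illustration, not the procedure BLS used: the assignment of PSUs to regional offices is invented, and the input order stands in for whatever priority ordering is applied.

```python
from collections import Counter

MAX_PER_WAVE = 6
MAX_PER_OFFICE_PER_WAVE = 2


def schedule_waves(psus):
    """Place each (name, office) pair, in priority order, in the earliest feasible wave."""
    waves = []          # list of lists of PSU names
    office_counts = []  # one Counter of office usage per wave
    for name, office in psus:
        for wave, counts in zip(waves, office_counts):
            if (len(wave) < MAX_PER_WAVE
                    and counts[office] < MAX_PER_OFFICE_PER_WAVE):
                wave.append(name)
                counts[office] += 1
                break
        else:
            # No existing wave can take this PSU, so open a new wave.
            waves.append([name])
            office_counts.append(Counter({office: 1}))
    return waves, office_counts


# Hypothetical split of the 21 new PSUs across the six regional offices.
office_sizes = {"A": 4, "B": 4, "C": 4, "D": 3, "E": 3, "F": 3}
new_psus = [
    (f"{office}{i}", office)
    for office, size in office_sizes.items()
    for i in range(1, size + 1)
]
waves, office_counts = schedule_waves(new_psus)
wave_sizes = [len(w) for w in waves]
print(wave_sizes)
```

Under these assumptions the scheduler produces waves of six, six, six, and three PSUs, matching the wave sizes described in the text.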

| PSU code (1) | PSU name | PSU definition (state and county) | Stratum population | Percent of index population |
|---|---|---|---|---|
| Region 1—Northeast, Division 1—New England | | | | |
| S11A | Boston–Cambridge–Newton, MA–NH | MA: Essex, Middlesex, Norfolk, Plymouth, Suffolk; NH: Rockingham, Strafford | 4,552,402 | 1.57 |
| N11B | Hartford–West Hartford–East Hartford, CT | CT: Hartford, Middlesex, Tolland | 5,005,793 | 1.73 |
| N11C | Springfield, MA | MA: Hampden, Hampshire | 4,233,926 | 1.46 |
| Region 1—Northeast, Division 2—Middle Atlantic | | | | |
| S12A | New York–Newark–Jersey City, NY–NJ–PA | NJ: Bergen, Essex, Hudson, Hunterdon, Middlesex, Monmouth, Morris, Ocean, Passaic, Somerset, Sussex, Union; NY: Bronx, Dutchess, Kings, Nassau, New York, Orange, Putnam, Queens, Richmond, Rockland, Suffolk, Westchester; PA: Pike | 19,567,410 | 6.76 |
| S12B | Philadelphia–Camden–Wilmington, PA–NJ–DE–MD | DE: New Castle; MD: Cecil; NJ: Burlington, Camden, Gloucester, Salem; PA: Bucks, Chester, Delaware, Montgomery, Philadelphia | 5,965,343 | 2.06 |
| N12C | Pittsburgh, PA | PA: Allegheny, Armstrong, Beaver, Butler, Fayette, Washington, Westmoreland | 4,065,877 | 1.40 |
| N12D | Buffalo–Cheektowaga–Niagara Falls, NY | NY: Erie, Niagara | 3,483,174 | 1.20 |
| N12E | Rochester, NY (W1) | NY: Livingston, Monroe, Ontario, Orleans, Wayne, Yates | 3,925,318 | 1.36 |
| N12F | Reading, PA | PA: Berks | 3,562,332 | 1.23 |
| Region 2—Midwest, Division 3—East North Central | | | | |
| S23A | Chicago–Naperville–Elgin, IL–IN–WI | IL: Cook, De Kalb, Du Page, Grundy, Kane, Kendall, Lake, McHenry, Will; IN: Jasper, Lake, Newton, Porter; WI: Kenosha | 9,461,105 | 3.27 |
| S23B | Detroit–Warren–Dearborn, MI | MI: Lapeer, Livingston, Macomb, Oakland, St. Clair, Wayne | 4,296,250 | 1.48 |
| N23C | Cincinnati, OH–KY–IN | IN: Dearborn, Ohio, Union; KY: Boone, Bracken, Campbell, Gallatin, Grant, Kenton, Pendleton; OH: Brown, Butler, Clermont, Hamilton, Warren | 3,395,853 | 1.17 |
| N23D | Cleveland–Elyria, OH | OH: Cuyahoga, Geauga, Lake, Lorain, Medina | 3,257,953 | 1.12 |
| N23E | Columbus, OH | OH: Delaware, Fairfield, Franklin, Hocking, Licking, Madison, Morrow, Perry, Pickaway, Union | 3,758,510 | 1.30 |
| N23F | Milwaukee–Waukesha–West Allis, WI | WI: Milwaukee, Ozaukee, Washington, Waukesha | 3,256,494 | 1.12 |
| N23G | Dayton, OH | OH: Greene, Miami, Montgomery | 3,924,320 | 1.36 |
| N23H | Flint, MI (W1) | MI: Genesee | 3,911,189 | 1.35 |
| N23I | Janesville–Beloit, WI (W2) | WI: Rock | 3,745,126 | 1.29 |
| N23J | Frankfort, IN (W3) | IN: Clinton | 3,427,365 | 1.18 |
| Region 2—Midwest, Division 4—West North Central | | | | |
| S24A | Minneapolis–St. Paul–Bloomington, MN–WI | MN: Anoka, Carver, Chisago, Dakota, Hennepin, Isanti, Le Sueur, Mille Lacs, Ramsey, Scott, Sherburne, Sibley, Washington, Wright; WI: Pierce, St. Croix | 3,348,859 | 1.16 |
| S24B | St. Louis, MO–IL | IL: Bond, Calhoun, Clinton, Jersey, Macoupin, Madison, Monroe, St. Clair; MO: Franklin, Jefferson, Lincoln, St. Charles, St. Louis, St. Louis City, Warren | 2,787,701 | .96 |
| N24C | Omaha–Council Bluffs, NE–IA (W2) | IA: Harrison, Mills, Pottawattamie; NE: Cass, Douglas, Sarpy, Saunders, Washington | 2,974,017 | 1.03 |
| N24D | Wichita, KS (W2) | KS: Butler, Harvey, Kingman, Sedgwick, Sumner | 2,842,770 | .98 |
| N24E | Lincoln, NE | NE: Lancaster, Seward | 3,288,318 | 1.14 |
| N24F | Wahpeton, ND–MN (W3) | MN: Wilkin; ND: Richland | 2,947,903 | 1.02 |
| Region 3—South, Division 5—South Atlantic | | | | |
| S35A | Washington–Arlington–Alexandria, DC–VA–MD–WV | DC: District of Columbia; MD: Calvert, Charles, Frederick, Montgomery, Prince George’s; VA: Alexandria City, Arlington, Clarke, Culpeper, Fairfax, Fairfax City, Falls Church City, Fauquier, Fredericksburg City, Loudoun, Manassas City, Manassas Park City, Prince William, Rappahannock, Spotsylvania, Stafford, Warren; WV: Jefferson | 5,636,232 | 1.95 |
| S35B | Miami–Fort Lauderdale–West Palm Beach, FL | FL: Broward, Miami–Dade, Palm Beach | 5,564,635 | 1.92 |
| S35C | Atlanta–Sandy Springs–Roswell, GA | GA: Barrow, Bartow, Butts, Carroll, Cherokee, Clayton, Cobb, Coweta, Dawson, DeKalb, Douglas, Fayette, Forsyth, Fulton, Gwinnett, Haralson, Heard, Henry, Jasper, Lamar, Meriwether, Morgan, Newton, Paulding, Pickens, Pike, Rockdale, Spalding, Walton | 5,286,728 | 1.83 |
| S35D | Tampa–St. Petersburg–Clearwater, FL | FL: Hernando, Hillsborough, Pasco, Pinellas | 2,783,243 | .96 |
| S35E | Baltimore–Columbia–Towson, MD | MD: Anne Arundel, Baltimore, Baltimore City, Carroll, Harford, Howard, Queen Anne’s | 2,710,489 | .94 |
| N35F | Charlotte–Concord–Gastonia, NC–SC (W3) | NC: Cabarrus, Gaston, Iredell, Lincoln, Mecklenburg, Rowan, Union; SC: Chester, Lancaster, York | 3,035,149 | 1.05 |
| N35G | Orlando–Kissimmee–Sanford, FL (W1) | FL: Lake, Orange, Osceola, Seminole | 2,642,941 | .91 |
| N35H | Richmond, VA | VA: Amelia, Caroline, Charles City, Chesterfield, Colonial Heights City, Dinwiddie, Goochland, Hanover, Henrico, Hopewell City, King William, New Kent, Petersburg City, Powhatan, Prince George, Richmond City, Sussex | 3,027,856 | 1.05 |
| N35I | Raleigh, NC | NC: Franklin, Johnston, Wake | 2,549,176 | .88 |
| N35J | Greenville–Anderson–Mauldin, SC | SC: Anderson, Greenville, Laurens, Pickens | 3,094,518 | 1.07 |
| N35K | Winston–Salem, NC (W3) | NC: Davidson, Davie, Forsyth, Stokes, Yadkin | 2,637,083 | .91 |
| N35L | Cape Coral–Fort Myers, FL | FL: Lee | 3,091,153 | 1.07 |
| N35M | Ocala, FL | FL: Marion | 2,568,744 | .89 |
| N35N | Gainesville, FL | FL: Alachua, Gilchrist | 2,913,140 | 1.01 |
| N35O | Wilmington, NC (W2) | NC: New Hanover, Pender | 2,736,321 | .94 |
| N35P | Jacksonville, NC (W2) | NC: Onslow | 3,100,604 | 1.07 |
| N35Q | Clarksburg, WV (W1) | WV: Doddridge, Harrison, Taylor | 2,563,098 | .89 |
| Region 3—South, Division 6—East South Central | | | | |
| N36A | Louisville/Jefferson County, KY–IN (W4) | IN: Clark, Floyd, Harrison, Scott, Washington; KY: Bullitt, Henry, Jefferson, Oldham, Shelby, Spencer, Trimble | 2,529,624 | .87 |
| N36B | Birmingham–Hoover, AL | AL: Bibb, Blount, Chilton, Jefferson, Shelby, St. Clair, Walker | 2,483,606 | .86 |
| N36C | Chattanooga, TN–GA | GA: Catoosa, Dade, Walker; TN: Hamilton, Marion, Sequatchie | 2,620,595 | .90 |
| N36D | Huntsville, AL (W4) | AL: Limestone, Madison | 2,801,399 | .97 |
| N36E | Florence–Muscle Shoals, AL | AL: Colbert, Lauderdale | 2,550,408 | .88 |
| N36F | Meridian, MS (W1) | MS: Clarke, Kemper, Lauderdale | 2,397,313 | .83 |
| Region 3—South, Division 7—West South Central | | | | |
| S37A | Dallas–Fort Worth–Arlington, TX | TX: Collin, Dallas, Denton, Ellis, Hood, Hunt, Johnson, Kaufman, Parker, Rockwall, Somervell, Tarrant, Wise | 6,426,214 | 2.22 |
| S37B | Houston–The Woodlands–Sugar Land, TX | TX: Austin, Brazoria, Chambers, Fort Bend, Galveston, Harris, Liberty, Montgomery, Waller | 5,920,416 | 2.04 |
| N37C | San Antonio–New Braunfels, TX | TX: Atascosa, Bandera, Bexar, Comal, Guadalupe, Kendall, Medina, Wilson | 2,436,095 | .84 |
| N37D | Oklahoma City, OK | OK: Canadian, Cleveland, Grady, Lincoln, Logan, McClain, Oklahoma | 2,812,948 | .97 |
| N37E | Baton Rouge, LA | LA: Ascension, East Baton Rouge, East Feliciana, Iberville, Livingston, Pointe Coupee, St. Helena, West Baton Rouge, West Feliciana | 2,543,610 | .88 |
| N37F | Lafayette, LA | LA: Acadia, Iberia, Lafayette, St. Martin, Vermilion | 2,444,837 | .84 |
| N37G | Brownsville–Harlingen, TX | TX: Cameron | 2,581,037 | .89 |
| N37H | Amarillo, TX | TX: Armstrong, Carson, Oldham, Potter, Randall | 2,756,117 | .95 |
| N37I | Russellville, AR (W2) | AR: Pope, Yell | 2,620,998 | .91 |
| N37J | Paris, TX (W3) | TX: Lamar | 2,851,943 | .98 |
| Region 4—West, Division 8—Mountain | | | | |
| S48A | Phoenix–Mesa–Scottsdale, AZ | AZ: Maricopa, Pinal | 4,192,887 | 1.45 |
| S48B | Denver–Aurora–Lakewood, CO | CO: Adams, Arapahoe, Broomfield, Clear Creek, Denver, Douglas, Elbert, Gilpin, Jefferson, Park | 2,543,482 | .88 |
| N48C | Las Vegas–Henderson–Paradise, NV | NV: Clark | 3,227,960 | 1.11 |
| N48D | Provo–Orem, UT | UT: Juab, Utah | 3,724,271 | 1.29 |
| N48E | Yuma, AZ | AZ: Yuma | 3,840,701 | 1.33 |
| N48F | St. George, UT (W3) | UT: Washington | 3,206,759 | 1.11 |
| Region 4—West, Division 9—Pacific | | | | |
| S49A | Los Angeles–Long Beach–Anaheim, CA | CA: Los Angeles, Orange | 12,828,837 | 4.43 |
| S49B | San Francisco–Oakland–Hayward, CA | CA: Alameda, Contra Costa, Marin, San Francisco, San Mateo | 4,335,391 | 1.50 |
| S49C | Riverside–San Bernardino–Ontario, CA | CA: Riverside, San Bernardino | 4,224,851 | 1.46 |
| S49D | Seattle–Tacoma–Bellevue, WA | WA: King, Pierce, Snohomish | 3,439,809 | 1.19 |
| S49E | San Diego–Carlsbad, CA | CA: San Diego | 3,095,313 | 1.07 |
| S49F | Honolulu, HI | HI: Honolulu | 1,360,301 | .47 |
| S49G | Anchorage, AK | AK: Anchorage, Matanuska–Susitna | 523,154 | .18 |
| N49H | Portland–Vancouver–Hillsboro, OR–WA | OR: Clackamas, Columbia, Multnomah, Washington, Yamhill; WA: Clark, Skamania | 5,208,366 | 1.80 |
| N49I | Santa Rosa, CA (W1) | CA: Sonoma | 5,163,670 | 1.78 |
| N49J | Chico, CA | CA: Butte | 4,623,339 | 1.60 |
| N49K | Moses Lake, WA (W4) | WA: Grant | 4,363,676 | 1.51 |

Notes: (1) PSU code: 1st character, S (self-representing) or N (nonself-representing); 2nd character, region number; 3rd character, division number; 4th character, A–Q, depending on the number of PSUs within a census division. The markers W1–W4 in parentheses after a PSU name designate the wave during which that new PSU will enter the index; a name with no marker is a continuing PSU.

Source: U.S. Bureau of Labor Statistics.
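The PSU code scheme described in the table note can be decoded mechanically. The following is a minimal sketch, assuming the four-character layout given in the note; the `PsuCode` class and `parse_psu_code` function are hypothetical names, and the division names are taken from the region headings in the table.

```python
from dataclasses import dataclass

REGIONS = {1: "Northeast", 2: "Midwest", 3: "South", 4: "West"}
DIVISIONS = {
    1: "New England", 2: "Middle Atlantic", 3: "East North Central",
    4: "West North Central", 5: "South Atlantic", 6: "East South Central",
    7: "West South Central", 8: "Mountain", 9: "Pacific",
}


@dataclass(frozen=True)
class PsuCode:
    """Decoded PSU code (hypothetical container for illustration)."""
    self_representing: bool
    region: int
    division: int
    letter: str


def parse_psu_code(code: str) -> PsuCode:
    """Split a code such as 'S11A' into the four parts described in the note."""
    if len(code) != 4:
        raise ValueError(f"expected 4 characters, got {code!r}")
    kind, region, division, letter = code
    if kind not in ("S", "N"):
        raise ValueError(f"first character must be S or N: {code!r}")
    if not (region.isdigit() and int(region) in REGIONS):
        raise ValueError(f"unknown region number: {code!r}")
    if not (division.isdigit() and int(division) in DIVISIONS):
        raise ValueError(f"unknown division number: {code!r}")
    if not ("A" <= letter <= "Q"):
        raise ValueError(f"fourth character must be A-Q: {code!r}")
    return PsuCode(kind == "S", int(region), int(division), letter)
```

For instance, `parse_psu_code("N49K")` describes a nonself-representing PSU in region 4 (West), division 9 (Pacific), which is the code listed for Moses Lake, WA.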

Steven P. Paben, William H. Johnson, and John F. Schilp, "The 2018 revision of the Consumer Price Index geographic sample," Monthly Labor Review, U.S. Bureau of Labor Statistics, October 2016, https://doi.org/10.21916/mlr.2016.47

1 Because of resource constraints, the U.S. Bureau of Labor Statistics (BLS) did not rotate the 2008 geographic sample, which was based on the 2000 decennial census.

2 The continuous rotation plan for geographic areas includes the following CPI component surveys: the Housing Survey, the Telephone Point-of-Purchase Survey, and the Commodity and Services survey. The latter two surveys moved to 4-year within-PSU rotation cycles as part of the 1998 CPI revision. In 2010, CPI began a multiyear effort to continually update the rent sample for the Housing Survey. (See Frank Ptacek, “Updating the rent sample for the CPI Housing Survey,” Monthly Labor Review, August 2013, https://www.bls.gov/opub/mlr/2013/article/updating-the-rent-sample-for-the-cpi-housing-survey.htm.) In January 2015, the Consumer Expenditure Survey (CE) switched to a geographic sample design based on the 2010 decennial census.

3 Janet L. Williams, Eugene F. Brown, and Gary R. Zion, “The challenge of redesigning the Consumer Price Index area sample,” Proceedings of the Survey Research Methods Section, vol. 1 (American Statistical Association, 1993), pp. 200–205.

4 Sample allocation divides the total sample into smaller samples for each classification variable.

5 Because of a lack of population, PSUs were not selected for the Northeast region’s urban nonmetropolitan index areas (size class D100) used in the 1998 area design.

6 Revised delineations of metropolitan statistical areas, micropolitan statistical areas, and combined statistical areas, and guidance on uses of the delineations of these areas, Bulletin No. 13-01 (Office of Management and Budget, February 28, 2013), https://obamawhitehouse.archives.gov/sites/default/files/omb/bulletins/2013/b-13-01.pdf.

7 Ralph Bradley, “Analytical bias reduction for small samples in the U.S. Consumer Price Index,” Journal of Business & Economic Statistics, vol. 25, no. 3, 2007, pp. 337–346.

8 Ibid.

9 A self-representing area represents only its own area definition. A nonself-representing area stands for multiple area definitions.

10 John F. Schilp, “Simulated statistics for the proposed by-division design in the Consumer Price Index,” Proceedings of the Government Statistics Section (American Statistical Association, 2014).

11 The 1998 area design, used currently, includes seven “Census region by size class” groups for the nonself-representing areas, because it has no sample allocated to the C-sized areas in the Northeast region.

12 Under the 2018 area design, the total population residing in each county included in a CBSA, both metropolitan and micropolitan, is defined as urban. Under the 1998 design, the total population in A- and B-sized PSUs was defined as urban, but only the population residing within the political boundaries of an “urban core” in the C-sized PSUs was defined as urban. The population outside of an urban core, but inside a county defining a C-sized PSU, was considered rural under the 1998 design.

13 The CE additionally covers rural areas. These areas are out of scope for the CPI.

14 The original design based on the 2000 decennial census had 86 urban PSUs for the CE and CPI surveys. CPI did not receive funding for its initiative in time to make the switch to that design. The CE program did implement the design, but then had to cut 11 PSUs because of insufficient funding in 2006.

15 The variance models are for 6-month percent-change standard errors, because the main purpose of the models is to allocate outlets and items to areas where the samples are rotated every 6 months.

16 An overview of the ACS data products can be found at https://www.Census.gov/programs-surveys/acs/.

17 The 3-year product was discontinued in 2015.

18 Education level for those age 25 and over.

19 A price relative is the ratio of an item’s current-period price to its previous-period price.

20 Median household income and median property value were derived from 2010 5-year ACS estimates for the final stratification model.

21 H. P. Friedman and J. Rubin, “On some invariant criteria for grouping data,” Journal of the American Statistical Association, vol. 62, 1967, pp. 1159–1178.

22 Susan L. King, John Schilp, and Erik Bergman, “Assigning PSUs to a stratification PSU,” Proceedings of the Survey Research Methods Section (American Statistical Association, 2011), pp. 2235–2246.

23 William H. Johnson, Steven P. Paben, and John F. Schilp, “The use of sample overlap methods in the Consumer Price Index redesign,” Proceedings of the Fourth International Conference on Establishment Surveys, June 11–14, 2012, Montréal, Canada (American Statistical Association, 2012).

24 See Walter M. Perkins, “1970 CPS redesign: proposed method for deriving sample PSU selection probabilities within 1970 NSR strata,” memorandum to Joseph Waksberg (U.S. Bureau of the Census, August 5, 1970); and Lawrence R. Ernst, “Maximizing the overlap between surveys when information is incomplete,” European Journal of Operational Research, vol. 27, no. 2, 1986, pp. 192–200.

25 SOCSLP was written in SAS by Sun Woong Kim, Steven G. Heeringa, and Peter W. Solenberger of the University of Michigan. It should remain usable in the future, because SAS will continue to be supported at BLS. For details on the methodology used in SOCSLP, see Kim, Heeringa, and Solenberger, “Optimizing solution sets in two-way controlled selection problems” (Institute for Social Research, University of Michigan), ftp://ftp.isr.umich.edu/pub/src/smp/socslp/socslp_paper.pdf.

26 Cincinnati, Cleveland, Milwaukee, Pittsburgh, and Portland were reselected as nonself-representing areas.